A Modular AI Architecture for Multi-Modal Infrastructure Inspection

Executive Summary

Global civil infrastructure (bridges, pavements, tunnels, energy transmission networks, industrial plants, and water systems) operates under compounding stress: progressive material aging, rising traffic and load demands, climate intensification, and chronically constrained maintenance budgets. Legacy inspection regimes, characterized by periodic manual visual surveys requiring lane closures, scaffolding, or rope access, are labor-intensive, subjective, inconsistently repeatable, and fundamentally incapable of scaling to the number of assets requiring continuous oversight.

This white paper presents a production-grade Modular AI Architecture for Multi-Modal Infrastructure Inspection, a five-layer enterprise framework that transforms reactive, episodic inspection into continuous, predictive, and autonomous asset health management. The architecture integrates heterogeneous sensing modalities (RGB, infrared/thermography, LiDAR, Ground-Penetrating Radar, acoustic emission, fiber Bragg grating sensors, and IMUs) deployed across UAV platforms (AI Drone Inspection), unmanned ground vehicles (Rover Inspection), and fixed IoT sensor networks. Deep learning-powered perception engines, physics-informed digital twins, and AI-driven decision support close the loop from raw sensor data to actionable maintenance work orders, fully integrated into enterprise Asset Management Systems (AMS) and Computerized Maintenance Management Systems (CMMS).

Targeting digital transformation leaders, infrastructure asset managers, and technology-commercial executives, this paper provides the technical depth, business rationale, deployment blueprints, and responsible AI governance frameworks necessary to transition from isolated AI pilots to enterprise-scale, cross-asset AI Infrastructure Inspection platforms. Reference patterns align with IBM's hybrid cloud and AI strategy, Watson AIOps principles, and the broader industry movement toward AI-first infrastructure operations.

Introduction: The Infrastructure Inspection Imperative

Infrastructure networks constitute the foundational substrate of economic productivity, public safety, and societal continuity. The American Society of Civil Engineers (ASCE) estimates that the United States alone requires over $2.6 trillion in infrastructure investment over the next decade to arrest deterioration; globally, the World Bank places the infrastructure investment gap at $15 trillion through 2040. Yet inspection, the primary mechanism for detecting deterioration before it becomes catastrophic failure, has remained largely unchanged for decades.

Traditional Infrastructure Inspection is characterized by periodic, manual visual surveys conducted by certified inspectors. These workflows require physical access (lane closures, scaffolding, rope access, boats, or aerial lifts), are labor-intensive, and introduce significant inspector-to-inspector variability. For linear assets such as power transmission lines (totaling over 7.3 million miles in the US alone) and oil and gas pipelines (over 3 million km globally), achieving comprehensive inspection coverage through manual means is logistically and economically infeasible.

The Convergence Enabling AI Infrastructure Inspection

Three mutually reinforcing technological trajectories are converging to make enterprise-scale AI Infrastructure Inspection not merely possible but operationally superior to legacy approaches:

The Problem with Point Solutions

Despite this convergence, the vast majority of current AI inspection deployments are vertical point solutions: monolithic, dataset-specific pipelines optimized for a single asset type (e.g., reinforced concrete bridge decks) and a single sensing modality (e.g., RGB images from a specific drone model). These systems are tightly coupled to particular datasets, hardware configurations, and asset operators. They cannot be transferred across asset classes, scaled across organizational portfolios, or compared using standardized evaluation protocols, which severely limits their cumulative impact.

This paper addresses this limitation by proposing a modular, five-layer AI architecture with clean abstraction boundaries, standardized inter-layer APIs, and a domain-agnostic data model. The architecture is designed for the techno-commercial leader seeking not merely a proof-of-concept, but a scalable, governable, and business-value-generating AI Infrastructure Inspection enterprise platform.

Infrastructure Asset Classes, Defect Typologies & Inspection Standards

A production AI Infrastructure Inspection platform must operate across heterogeneous asset classes, each with distinct material compositions, defect phenomenologies, inspection standards, and consequence-of-failure profiles. The following taxonomy provides the ontological foundation for the architecture's data model and perception modules.

Asset Taxonomy and Characteristic Defects

| Asset Class | Primary Defect Types | Sensing Modalities | Governing Standards |
|---|---|---|---|
| Transportation Structures (Bridges, Viaducts, Culverts) | Flexural/shear cracking, spalling, rebar corrosion, bearing degradation, joint distress, scour, delamination | RGB (surface), IR (delamination, moisture), LiDAR (geometry, deflection), GPR (sub-surface, rebar depth), vibration (modal analysis) | AASHTO, FHWA SI&A, EN 1337, ISO 13822 |
| Power Transmission Lines & Substations | Insulator flashover/tracking, conductor fatigue, hardware corrosion, tower deformation, vegetation encroachment, thermal hotspots | RGB+IR (UAV aerial inspection / AI Drone Inspection), LiDAR (vegetation clearance), UV (corona discharge) | IEC 60300, NERC FAC-003, IEEE C2 (NESC) |
| Oil & Gas Pipelines | External corrosion, coating disbondment, mechanical damage, weld anomalies, leakage, soil movement | RGB+IR (aerial and rover inspection), GPR, acoustic emission, magnetic flux leakage (MFL) | ASME B31.4/B31.8, API 570, API 1163 |
| Industrial Facilities (Tanks, Pressure Vessels, Pipe Racks) | Wall thinning, cladding failure, weld cracking, vibration fatigue, fouling, process leaks | RGB, IR, UT thickness (integrated with rover inspection), acoustic emission, strain gauges | API 510/570/653, ASME PCC-2, RBI per API 580 |
| Roadway Pavement | Alligator cracking, rutting, potholes, surface raveling, joint distress (rigid pavement) | RGB (vehicle-mounted), LiDAR (rutting depth, IRI), GPR (base layer), 3D structured light | ASTM D6433 (PCI), AASHTO R 36, ISO 17450 |

Standards Compliance as an Architectural Requirement

A critical but frequently underappreciated architectural constraint is that AI inspection systems must not merely detect and classify defects; they must also translate raw model predictions (pixel-wise segmentation masks, object bounding boxes, anomaly scores) into the specific condition rating formats mandated by governing standards. For example, FHWA National Bridge Inventory (NBI) component condition ratings (0–9 scale), AASHTO sufficiency ratings, or IEC 60300 reliability indices must be computed from AI outputs and presented in report formats acceptable to regulatory authorities.

This translation requirement, from model output to standardized rating, must be encoded as a first-class responsibility of the Decision Support Layer (Section 10), not as an afterthought. Failure to architect for standards compliance is a common reason AI inspection pilots fail to achieve regulatory acceptance and operational deployment.
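
To make the translation step concrete, the following minimal sketch maps a measured maximum crack width to a discrete condition state. The four-state scale, the function name `condition_state`, and in particular the threshold values are hypothetical placeholders chosen for illustration; they are not taken from any FHWA, AASHTO, or IEC document, and a real deployment would load the limits from the governing standard for the element type.

```python
from bisect import bisect_right

# HYPOTHETICAL crack-width limits in mm separating condition states 1..4.
# Placeholders only: replace with the limits from the governing standard.
CRACK_WIDTH_LIMITS_MM = (0.3, 1.0, 3.0)

def condition_state(max_crack_width_mm, limits=CRACK_WIDTH_LIMITS_MM):
    """Map a measured maximum crack width to a state in 1..len(limits)+1.

    A width exactly equal to a limit is assigned to the worse state.
    """
    if max_crack_width_mm < 0:
        raise ValueError("crack width cannot be negative")
    return 1 + bisect_right(limits, max_crack_width_mm)

# Example: widths as they might come from a segmentation post-processor.
states = [condition_state(w) for w in (0.1, 0.5, 2.0, 5.0)]
```

Keeping the limits in a data table rather than in code is one way to let the same function serve several standards.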

Core AI Inspection Task Framework & KPI Definitions

The following five canonical task categories define the complete scope of AI Infrastructure Inspection. Each task operates at a distinct spatial and temporal scale and requires specific model architectures, training data, and evaluation protocols.

| Task Category | Description & Examples | Output Representation | Primary KPIs |
|---|---|---|---|
| Component Detection | Localizing structural and mechanical elements: towers, insulators, bolts, joints, bearings, expansion joints, cables | Bounding boxes with class labels and confidence scores; geo-referenced in asset coordinate system | mAP@0.5, mAP@0.5:0.95, detection recall for safety-critical components |
| Defect Detection & Segmentation | Pixel-wise identification and quantification of cracks, spalling, corrosion, delamination, vegetation encroachment, coating failure, efflorescence | Instance segmentation masks with defect class, severity grade, width/area measurements, and calibrated uncertainty | IoU, Dice coefficient, boundary F-score (BoundF), crack width RMSE, detection rate vs. human baseline |
| Condition Rating & Anomaly Detection | Asset- and element-level health indexing aligned with regulatory standards; unsupervised or semi-supervised detection of statistical anomalies in sensor data | Scalar condition indices with uncertainty bounds; anomaly scores with flagged time segments or spatial regions | Rating concordance with certified inspector (Cohen's Kappa, RMSE), anomaly detection AUC, false positive rate |
| 3D Reconstruction & Deformation Monitoring | Photogrammetric and LiDAR-based reconstruction of asset geometry; differential analysis across inspection campaigns for deformation, settlement, or geometric change | Textured 3D meshes, dense point clouds, deformation heatmaps with mm-level precision geo-referenced to survey control | Point cloud completeness, surface normal accuracy, deformation detection threshold (mm), registration RMSE |
| Prognostics & Remaining Useful Life (RUL) | Data-driven deterioration forecasting, failure probability estimation, and remaining useful life prediction conditioned on loading, environmental, and maintenance history | Time-series RUL estimates with prediction intervals; failure probability curves; risk matrices | RMSE/MAE of RUL predictions, Brier score for failure probability, calibration (reliability diagrams) |
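
As an illustration of the segmentation KPIs listed above, the sketch below computes IoU and the Dice coefficient for a pair of binary masks. The function name and the nested-list mask representation are assumptions made to keep the example dependency-free; a production pipeline would normally operate on array types.

```python
def iou_and_dice(pred, truth):
    """Return (IoU, Dice) for two equally shaped binary masks (nested lists of 0/1)."""
    inter = union = pred_sum = truth_sum = 0
    for p_row, t_row in zip(pred, truth):
        for p, t in zip(p_row, t_row):
            inter += p & t        # pixel is defect in both masks
            union += p | t        # pixel is defect in at least one mask
            pred_sum += p
            truth_sum += t
    # Convention assumed here: two empty masks count as a perfect match.
    if union == 0:
        return 1.0, 1.0
    return inter / union, 2 * inter / (pred_sum + truth_sum)

pred = [[0, 1, 1, 0],
        [0, 1, 1, 0]]
truth = [[0, 1, 0, 0],
         [0, 1, 1, 0]]
iou, dice = iou_and_dice(pred, truth)   # 3 shared pixels, 4 in the union
```

For thin structures such as cracks, IoU penalizes one-pixel boundary shifts heavily, which is one reason the table pairs it with a boundary F-score.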

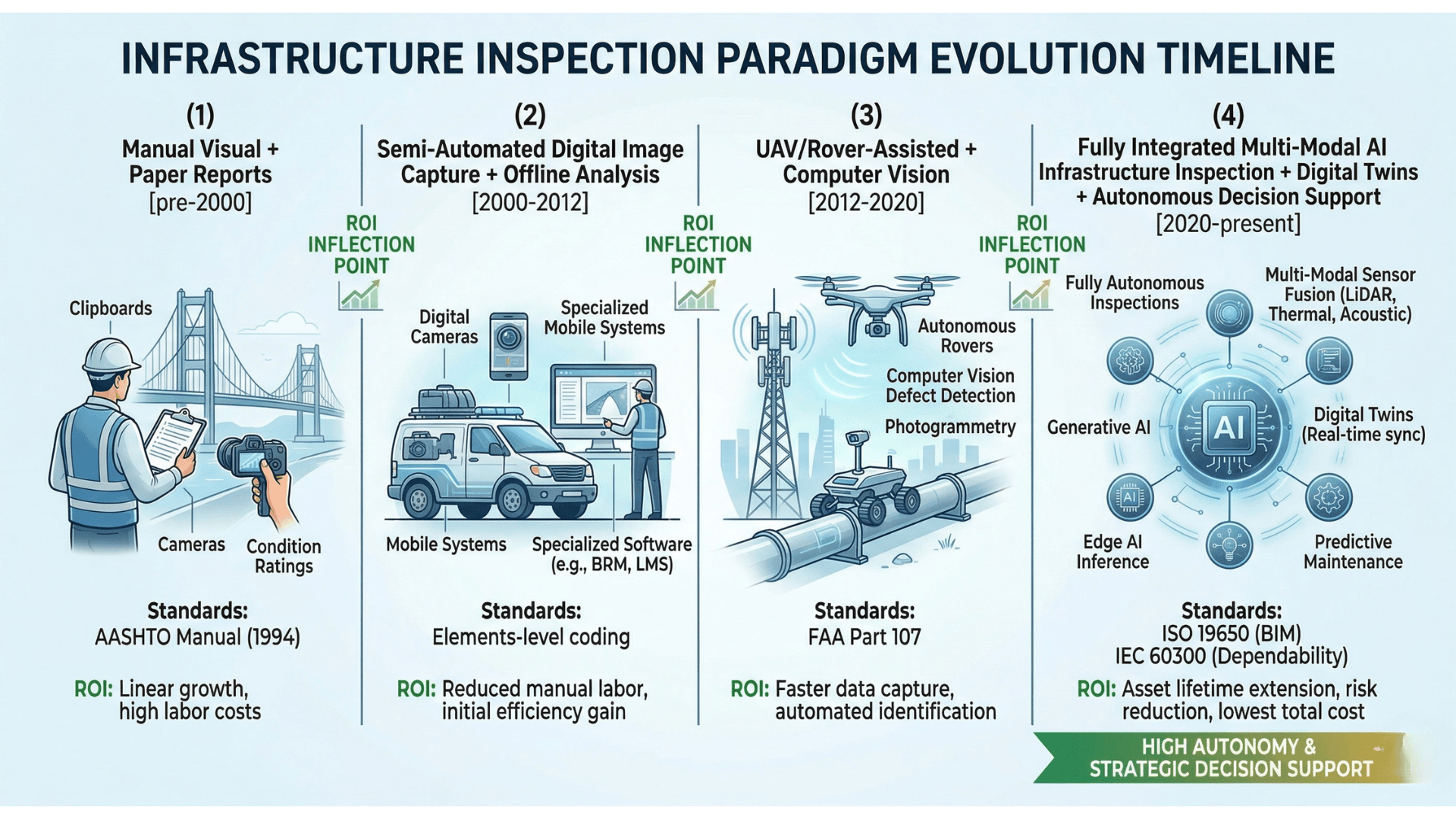

Evolution of Computer Vision for Infrastructure

Understanding the architectural evolution from handcrafted computer vision to foundation-model-powered AI Infrastructure Inspection is essential context for enterprise technology decision-makers evaluating platform maturity and investment horizon.

Generation 1: Handcrafted Feature Engineering (pre-2012)

Early automated inspection systems employed handcrafted feature descriptors (Canny edge detection, Gabor filter banks, Histogram of Oriented Gradients (HOG), local binary patterns (LBP), and Hough transforms) combined with classical machine learning classifiers (SVMs, Random Forests, AdaBoost). Performance was severely constrained by sensitivity to illumination variation, viewpoint changes, surface texture variability, and shadow artifacts, all of which are endemic in field inspection environments.

Generation 2: Deep CNN-Based Single-Modality Models (2012-2019)

The deep learning revolution, anchored by AlexNet (2012) and subsequently VGG, ResNet, and DenseNet architectures, transformed inspection AI. Key developments for infrastructure inspection included:

Generation 3: Multi-Modal Fusion and Transformer Architectures (2019-2023)

Vision Transformers (ViT, Swin Transformer, DeiT) introduced attention-based global context modeling, outperforming CNNs on high-resolution structural image analysis requiring long-range spatial reasoning. Hybrid CNN-Transformer architectures (e.g., TransUNet, Swin-UNet) combined local feature extraction with global context for dense prediction tasks. Multi-modal fusion architectures emerged to jointly process RGB, IR, LiDAR, and vibration data, leveraging complementary signal characteristics for superior defect detection, particularly for sub-surface anomalies inaccessible to single-modality systems.

Generation 4: Foundation Models and Integrated AI Infrastructure Platforms (2023-present)

Large vision and vision-language foundation models (the Segment Anything Model (SAM), CLIP, GPT-4V, Gemini Vision) are being adapted for zero-shot and few-shot inspection, enabling generalization across unseen defect types and asset classes without full retraining. Physics-informed neural networks (PINNs) integrate conservation laws and structural mechanics constraints directly into the learning objective, improving extrapolation to loading scenarios not present in training data. IBM's Granite foundation models and watsonx platform represent enterprise-grade examples of this pattern: general-purpose AI adapted for domain-specific industrial applications through parameter-efficient fine-tuning.

Modular AI Architecture: Five-Layer Reference Model

The proposed architecture decouples the five functional domains of AI Infrastructure Inspection into independently deployable, horizontally scalable layers. Each layer exposes standardized APIs and data schemas, enabling mix-and-match composition of best-of-breed components, phased adoption, and clean technology refresh without platform lock-in.

| Layer | Primary Functions | Key Technologies | Integration Interfaces |
|---|---|---|---|
| L1: Acquisition | UAV/UGV/fixed sensor deployment, mission planning, on-board preprocessing, edge inference | UAV FCs (ArduPilot, PX4), ROS2, NVIDIA Jetson, TensorRT, MQTT | MAVLink, ROS2 topics, MQTT broker, local storage API |
| L2: Data Management | Multi-modal ingest, temporal/spatial synchronization, 3D reconstruction, quality control, labeling | Apache Kafka, COLMAP, Open3D, Metashape, MinIO/S3, Label Studio | REST API (ingest), GeoTIFF/LAS/E57 formats, active learning API |
| L3: Perception | Single-modal defect detection/segmentation, multi-modal fusion, anomaly detection, uncertainty quantification | PyTorch, ONNX, TensorRT, Hugging Face, NVIDIA Triton, MLflow | REST/gRPC inference API, GeoJSON defect schema, calibrated probability outputs |
| L4: Asset Modeling | SHM modal analysis, damage localization, digital twin updating, deterioration forecasting, what-if simulation | FEniCS/OpenSees (FEM), PyMC (Bayesian), LSTM/Transformer, ANSYS Digital Twin, IFC/CityGML | BIM/IFC API, REST (twin state), time-series DB (InfluxDB, TimescaleDB) |
| L5: Decision Support | Risk scoring & prioritization, work-order generation, regulatory report generation, AMS/CMMS integration, human-in-the-loop review | Esri ArcGIS, IBM Maximo, SAP PM, PowerBI/Tableau, LangChain (report gen), Streamlit | IBM Maximo REST API, SAP PM RFC, GeoJSON, PDF/Word report export, audit trail API |

Acquisition Layer: UAV, Rover & Fixed Sensor Platforms

The Acquisition Layer is the physical-digital interface of the AI Infrastructure Inspection system, the domain where sensor physics, platform engineering, regulatory compliance, and AI preprocessing converge. Inspection data quality is irreversibly determined at this layer; no amount of downstream algorithmic sophistication can recover information not captured at acquisition.

UAV Platforms for AI Drone Inspection and Aerial Inspection

Unmanned Aerial Vehicles (UAVs) have become the primary platform for AI Drone Inspection of bridges, power lines, towers, wind turbines, and tall structures, offering access to elements otherwise requiring costly and disruptive access scaffolding. Architecturally relevant UAV design parameters include:

- Payload Configuration: Multi-sensor gimbals integrating RGB (≥20MP, 4K video), uncooled or cooled IR (LWIR, 8-14μm), and an optional LiDAR module. Calibrated extrinsic parameters (rotation and translation between sensors) are essential for accurate multi-modal registration in the Data Management Layer.

- Ground Sampling Distance (GSD): GSD (mm/pixel) governs the minimum detectable crack width and depends on sensor pixel pitch, lens focal length, and standoff distance. For concrete crack detection (≥0.2mm per AASHTO) at altitudes of 5-10m, RGB sensors require ≥20MP resolution. Higher-altitude surveys sacrifice GSD for coverage rate, a fundamental trade-off parameterized during mission planning.

- RTK-GNSS and LiDAR Positioning: Real-Time Kinematic GNSS (±1-3cm horizontal accuracy) or LiDAR-Inertial Odometry (LIO) is required for geo-referencing defects in asset coordinates with sufficient precision for digital twin integration and cross-inspection change detection.

- Regulatory Compliance: Operations in controlled airspace require Beyond Visual Line of Sight (BVLOS) waivers, Remote ID compliance (FAA Part 89, EU 2021/664), and geofencing enforcement, all of which are architectural requirements for the mission planning subsystem.
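
The GSD trade-off described above follows from the pinhole camera relation GSD = pixel pitch × distance / focal length. The sketch below evaluates it for a mission planner; the function names and the example camera parameters (13.2 mm sensor width, 5472 px image width, 8.8 mm lens) are illustrative assumptions, not values from this paper.

```python
def gsd_mm_per_px(sensor_width_mm, image_width_px, focal_length_mm, distance_m):
    """Ground sampling distance (mm per pixel) for a nadir or face-on view."""
    pixel_pitch_mm = sensor_width_mm / image_width_px
    return pixel_pitch_mm * (distance_m * 1000.0) / focal_length_mm

def max_distance_m(target_gsd_mm, sensor_width_mm, image_width_px, focal_length_mm):
    """Largest standoff distance (m) that still achieves the target GSD."""
    pixel_pitch_mm = sensor_width_mm / image_width_px
    return target_gsd_mm * focal_length_mm / pixel_pitch_mm / 1000.0

# Assumed example camera: roughly a 20 MP sensor with a wide lens.
gsd = gsd_mm_per_px(13.2, 5472, 8.8, distance_m=5.0)
standoff = max_distance_m(1.0, 13.2, 5472, 8.8)   # distance for 1 mm/px
```

Whether defects narrower than one pixel remain detectable depends on contrast and on the perception model, which this sketch does not address.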

UGV Platforms for Rover Inspection

Unmanned Ground Vehicles (UGVs) equipped for Rover Inspection extend AI infrastructure monitoring to environments inaccessible or unsafe for UAVs: interior bridge deck soffits, tunnel linings, pipeline corridors, and confined industrial spaces. Key UGV capabilities include integrated RGB+IR cameras, ultrasonic thickness measurement (UT) for corrosion quantification, SLAM-based autonomous navigation, and manipulator arms for contact-based sensor deployment.

On-Board Edge Intelligence

Modern AI Drone Inspection and Rover Inspection platforms embed edge inference capabilities using NVIDIA Jetson Orin (275 TOPS), Qualcomm Cloud AI 100, or Intel Movidius neural compute sticks. On-board functions include:

- Real-time component detection for obstacle avoidance and preliminary defect flagging (YOLO-class models at 30+ fps on Jetson Orin).

- Image quality assessment (blur, exposure, coverage completeness) with adaptive mission re-planning for sub-optimal frames.

- Efficient compression (HEVC/H.265, JPEG XL) optimized for downstream analytics fidelity rather than display quality.

- Bandwidth-aware streaming: high-priority anomaly frames streamed immediately; routine frames batched for post-mission ingest.

Data Management Layer: Ingest, Synchronization & 3D Reconstruction

The Data Management Layer is the enterprise data platform of the AI Infrastructure Inspection system. It must ingest petabyte-scale heterogeneous sensor data from diverse acquisition platforms, enforce data quality standards, construct spatially and temporally coherent multi-modal representations, and serve inspection-ready datasets to the Perception Layer, all with full provenance tracking and audit capability.

Multi-Modal Ingest and Temporal Synchronization

Multi-sensor platforms generate data streams with heterogeneous sampling rates, data formats, and communication protocols. Precise temporal synchronization is a prerequisite for accurate multi-modal fusion:

- Hardware synchronization using PPS (pulse-per-second) signals from GNSS receivers (±1μs accuracy) for LiDAR-camera and LiDAR-IMU temporal alignment.

- Software synchronization via message interpolation for sensors lacking hardware sync, with ROS2 bag files or Apache Kafka topics providing ordered, timestamped message streams.

- Extrinsic calibration: rigid body transforms between sensor coordinate frames, calibrated using target-based (AprilTag, checkerboard) or targetless (LiDAR-camera hand-eye calibration) methods, stored in the asset metadata registry.
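
Software synchronization by message interpolation can be sketched as follows: a slowly varying scalar stream (here an altitude reading) is linearly interpolated at the timestamp of a camera frame. The function name and sample values are illustrative assumptions; rotations and other non-scalar quantities need a different interpolation scheme.

```python
from bisect import bisect_left

def interpolate_at(timestamps, values, t_query):
    """Linearly interpolate a scalar stream at t_query.

    timestamps must be sorted ascending and the same length as values.
    """
    if not timestamps[0] <= t_query <= timestamps[-1]:
        raise ValueError("query time outside the recorded stream")
    i = bisect_left(timestamps, t_query)   # first index with timestamp >= t_query
    if timestamps[i] == t_query:
        return values[i]
    t0, t1 = timestamps[i - 1], timestamps[i]
    w = (t_query - t0) / (t1 - t0)         # fractional position between samples
    return values[i - 1] + w * (values[i] - values[i - 1])

# Altitude logged at 100 Hz; camera frame captured between two samples.
alt_t = [0.00, 0.01, 0.02]
alt_m = [10.0, 12.0, 16.0]
altitude_at_frame = interpolate_at(alt_t, alt_m, 0.015)
```

Rejecting queries outside the recorded interval avoids silent extrapolation when one sensor starts late or drops out.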

3D Reconstruction: Photogrammetry and LiDAR Fusion

Dense 3D representations enable geo-referenced defect localization, cross-inspection change detection, and digital twin initialization, functions central to enterprise AI Infrastructure Inspection programs.

- Structure-from-Motion / Multi-View Stereo (SfM-MVS): Pipelines (COLMAP, Agisoft Metashape, RealityCapture) process overlapping RGB image sequences to generate dense point clouds, textured 3D meshes, and orthomosaics. Accuracy: ±3-10mm at 5m flight altitude with RTK control; GCP-augmented accuracy ±2-5mm.

- LiDAR-Inertial SLAM: Algorithms such as LIO-SAM and FAST-LIO2 fuse LiDAR point clouds with IMU data for real-time dense mapping. Accuracy: ±5-20mm without GNSS; augmented to ±2-5mm with GNSS corrections.

- Multi-Modal Point Cloud Registration: Aligning LiDAR and photogrammetric point clouds, and registering IR and RGB image projections onto the 3D mesh, using feature-based (FPFH, SHOT descriptors) or deep learning-based (DeepICP, OverlapNet) registration. This step is critical for coherent multi-modal analysis.

Data Quality Control and Semi-Automated Labeling

Automated quality checks enforce minimum standards for image sharpness (Laplacian variance threshold), exposure (histogram analysis), GPS accuracy (HDOP < 2.0), and multi-modal registration error (reprojection RMSE < 1.5 pixels). Substandard frames are flagged for re-acquisition, with adaptive UAV mission replanning triggered automatically.
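
A minimal sketch of such a quality gate is shown below, using the HDOP and reprojection limits stated above. The sharpness threshold `min_sharpness` is a hypothetical default (the text does not specify one), and the pure-Python Laplacian is for illustration only; a production system would use an optimized image library.

```python
from statistics import pvariance

def laplacian_variance(gray):
    """Variance of the 4-neighbour Laplacian over interior pixels.

    gray is a nested list of intensities, at least 3x3. Low values suggest blur.
    """
    h, w = len(gray), len(gray[0])
    responses = [
        gray[y - 1][x] + gray[y + 1][x] + gray[y][x - 1] + gray[y][x + 1]
        - 4 * gray[y][x]
        for y in range(1, h - 1)
        for x in range(1, w - 1)
    ]
    return pvariance(responses)

def passes_quality_gate(gray, hdop, reproj_rmse_px, min_sharpness=100.0):
    """Apply sharpness, GPS, and registration checks to one frame."""
    return (
        laplacian_variance(gray) >= min_sharpness   # hypothetical threshold
        and hdop < 2.0                              # limit from the text
        and reproj_rmse_px < 1.5                    # limit from the text
    )

# A high-contrast checkerboard is sharp; a uniform frame is not.
checker = [[255 if (x + y) % 2 else 0 for x in range(4)] for y in range(4)]
flat = [[128] * 4 for _ in range(4)]
```

In practice the sharpness threshold is usually tuned per camera and surface type, since texture-poor surfaces score low even when in focus.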

Semi-automated labeling pipelines combine SAM-based prompt-driven segmentation for bounding box and mask generation, existing AI Inspection model pre-annotations for review-and-correct workflows, and active learning query strategies (uncertainty sampling, core-set selection) to maximize label efficiency, reducing expert annotation effort by 60-80% vs. manual annotation.
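
Uncertainty sampling, one of the query strategies named above, can be sketched as ranking unlabeled images by the entropy of the model's class probabilities and sending the top of the ranking to annotators. The image identifiers, probabilities, and function names are illustrative assumptions.

```python
import math

def entropy(probs):
    """Shannon entropy (nats) of a class-probability vector."""
    return -sum(p * math.log(p) for p in probs if p > 0)

def select_for_labeling(predictions, budget):
    """Return the ids of the `budget` most uncertain images.

    predictions maps image id -> list of class probabilities.
    """
    ranked = sorted(predictions, key=lambda k: entropy(predictions[k]), reverse=True)
    return ranked[:budget]

predictions = {
    "img_a": [0.98, 0.02],   # confident
    "img_b": [0.55, 0.45],   # near the decision boundary
    "img_c": [0.80, 0.20],
}
queue = select_for_labeling(predictions, budget=2)
```

Pure uncertainty sampling tends to pick near-duplicate frames from the same flight, which is why it is often combined with a diversity criterion such as core-set selection.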

Perception Layer: Single- and Multi-Modal Deep Learning

The Perception Layer is the core AI engine of the infrastructure inspection platform, the domain where sensor data is transformed into structured, quantified, geo-referenced defect intelligence. It encompasses single-modality AI models, multi-modal fusion architectures, and uncertainty quantification mechanisms essential for human-in-the-loop decision support.

Single-Modality Perception Architectures

Production-grade single-modality models for AI Infrastructure Inspection employ the following architectural patterns:

| Task | Architecture | Representative Models | Benchmark Performance |
|---|---|---|---|
| Crack / Spalling Detection & Segmentation | Encoder-decoder with skip connections; attention gates for fine-grained crack localization | U-Net, Swin-UNet, TransUNet, DeepLabv3+ with ResNet-50/101 backbone | IoU 0.78-0.92 (DeepCrack, CrackForest datasets); F1 0.82-0.90 |
| Component Detection (UAV AI Drone Inspection) | Single-stage anchor-free detection for real-time edge inference; two-stage for high-accuracy ground processing | YOLOv8/YOLOv9, RT-DETR, Faster R-CNN, DINO | mAP@0.5: 0.85-0.95 (insulator, tower, bolt detection); 30-90 fps on Jetson Orin |
| Corrosion & Surface Anomaly Classification | CNN feature extraction + classification head; few-shot learning for rare corrosion types | EfficientNet-B4/B7, Vision Transformer (ViT-B/16), DINOv2 + linear probe | Accuracy 88-95%; AUC 0.93-0.98 (MCIS, SSCD datasets) |
| Anomaly Detection (unsupervised) | Normalizing flows, autoencoders, patch-based self-supervised methods for anomaly scoring without defect labels | PatchCore, CFA, FastFlow, PaDiM | AUROC 0.92-0.98 on MVTec-AD; transferable to structural texture anomalies |

Multi-Modal Fusion Strategies

Multi-modal fusion extracts synergistic information from complementary sensing modalities, for example, RGB imagery for surface crack morphology combined with IR thermography for sub-surface delamination, or visual inspection data fused with vibration-based modal analysis for comprehensive structural assessment. Three principal fusion paradigms are employed:

- Early Fusion (Input-Level): Raw sensor data or low-level features from multiple modalities are concatenated as additional input channels before the primary feature extractor. Effective when modalities are spatially registered and temporally synchronous. Example: 4-channel RGB+IR tensor as input to a modified U-Net, achieving +8-12% IoU improvement over RGB-only for bridge deck delamination detection.

- Mid-Level Fusion (Feature-Level): Modality-specific encoders extract independent feature representations, which are combined via cross-modal attention (Transformer cross-attention), gating mechanisms (Multi-modal Dynamic Fusion), or graph neural networks (multi-modal scene graphs). This approach handles asynchronous, spatially misaligned, or higher-dimensional modality combinations (e.g., RGB image + LiDAR point cloud + vibration time series) and is the recommended approach for heterogeneous infrastructure sensing stacks.

- Late Fusion (Decision-Level): Independent model outputs (class probabilities, bounding boxes, condition scores) from each modality are aggregated using learned weights, Bayesian model averaging, or Dempster-Shafer evidence theory. This is the easiest paradigm to implement and the most robust to individual modality failures (sensor dropout, calibration errors), which are important resilience properties for field inspection systems.
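
The robustness of late fusion to sensor dropout can be illustrated with a weighted average that simply skips modalities with no output and renormalizes the remaining weights. This is a minimal sketch; the function name, weights, and probabilities are illustrative assumptions, and learned weights or Dempster-Shafer combination would replace the fixed weights in practice.

```python
def late_fuse(modality_probs, weights):
    """Weighted average of per-modality class probabilities.

    modality_probs maps modality name -> probability list, or None on dropout.
    weights maps modality name -> non-negative weight.
    """
    available = {m: p for m, p in modality_probs.items() if p is not None}
    if not available:
        raise ValueError("no modality produced an output")
    total_w = sum(weights[m] for m in available)   # renormalize over survivors
    n_classes = len(next(iter(available.values())))
    return [
        sum(weights[m] * p[c] for m, p in available.items()) / total_w
        for c in range(n_classes)
    ]

weights = {"rgb": 0.6, "ir": 0.4}
fused = late_fuse({"rgb": [0.7, 0.3], "ir": [0.4, 0.6]}, weights)
rgb_only = late_fuse({"rgb": [0.7, 0.3], "ir": None}, weights)   # IR dropped out
```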

Recent research demonstrates that fusing visual imagery with structured inspection reports (textual data) using cross-modal transformers improves bridge element condition rating concordance with certified inspectors by 15-20%, suggesting that language-grounded multi-modal AI is a significant emerging capability for AI in Infrastructure applications.

Uncertainty Quantification for Human-in-the-Loop Workflows

High-stakes infrastructure decisions require not just point predictions but calibrated uncertainty estimates that enable intelligent human review prioritization. Production-grade uncertainty methods include:

- Monte Carlo Dropout (MC-Dropout): Approximate Bayesian inference via stochastic forward passes (T=20-50 samples); practical for deployment without architecture changes; the predictive mean and variance are computed over the samples.

- Deep Ensembles: 3-10 independently trained models; gold standard for calibration; computationally expensive but parallelizable across inference nodes.

- Evidential Deep Learning: Predicts higher-order Dirichlet distributions over class probabilities, distinguishing aleatoric (data noise) from epistemic (model knowledge) uncertainty, enabling adaptive data collection targeted at epistemically uncertain regions.

- Conformal Prediction: Distribution-free coverage guarantees (coverage ≥ 1-α) for prediction sets, assuming only that calibration and test data are exchangeable; increasingly adopted for certified AI systems in regulated infrastructure domains.
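
As a sketch of how the conformal coverage guarantee is obtained in practice, the following implements split conformal prediction for classification with the nonconformity score 1 − p(true class). The calibration scores and probabilities are made-up illustrative values, and the function names are assumptions.

```python
import math

def conformal_threshold(cal_scores, alpha):
    """Finite-sample-corrected (1 - alpha) quantile of calibration scores."""
    n = len(cal_scores)
    k = math.ceil((n + 1) * (1 - alpha))   # rank of the order statistic to use
    if k > n:
        return float("inf")                # too few calibration points: keep all classes
    return sorted(cal_scores)[k - 1]

def prediction_set(probs, q_hat):
    """All class indices whose nonconformity score 1 - p is within the threshold."""
    return [c for c, p in enumerate(probs) if 1.0 - p <= q_hat]

# Scores 1 - p(true class) on a held-out, inspector-labeled calibration set.
cal_scores = [0.05, 0.10, 0.12, 0.20, 0.25, 0.30, 0.40, 0.55, 0.70]
q_hat = conformal_threshold(cal_scores, alpha=0.2)
labels = prediction_set([0.50, 0.46, 0.04], q_hat)   # ambiguous case keeps two classes
```

A prediction set containing more than one class is a natural trigger for routing the finding to human review.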

Structural & Asset Modeling Layer: SHM and Digital Twins

The Structural and Asset Modeling Layer elevates AI Infrastructure Inspection from defect cataloguing to structural prognosis, integrating perception outputs with physics-based structural models, sensor time series, and engineering knowledge to produce actionable health intelligence and failure risk forecasts. This is the layer where AI in Infrastructure transitions from reactive to predictive asset management.

Data-Driven Structural Health Monitoring (SHM)

Operational modal analysis (OMA), extracting natural frequencies, mode shapes, and damping ratios from ambient vibration response under operational loading, provides a sensitive, non-destructive indicator of structural change. AI-enhanced SHM workflows include:

- Automated OMA using SSI-COV (Stochastic Subspace Identification-Covariance Driven) and PolyMAX algorithms, with AI-assisted stabilization diagram analysis replacing manual expert pole selection.

- Damage-sensitive feature extraction: statistical features (kurtosis, RMS, crest factor), wavelet packet energy coefficients, and autoregressive model residuals from vibration signals serve as inputs to damage classification networks.

- Long Short-Term Memory (LSTM), Temporal Convolutional Networks (TCN), and Transformer-based sequence models for modeling structural response under variable environmental and loading conditions, enabling separation of damage-induced changes from temperature, humidity, and traffic effects.
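
The statistical damage-sensitive features named above have simple closed forms. The sketch below computes RMS, (non-excess) kurtosis, and crest factor for one window of a vibration signal; the function name and sample values are illustrative assumptions.

```python
import math

def vibration_features(signal):
    """Return RMS, kurtosis (Pearson, normal = 3), and crest factor of a window."""
    n = len(signal)
    mean = sum(signal) / n
    rms = math.sqrt(sum(x * x for x in signal) / n)
    var = sum((x - mean) ** 2 for x in signal) / n
    if rms == 0 or var == 0:
        raise ValueError("features are undefined for a constant or all-zero signal")
    kurtosis = sum((x - mean) ** 4 for x in signal) / n / var ** 2
    crest_factor = max(abs(x) for x in signal) / rms
    return {"rms": rms, "kurtosis": kurtosis, "crest_factor": crest_factor}

# A window containing one impulsive event among low-level ambient response.
features = vibration_features([0.1, -0.2, 0.15, -0.1, 2.5, -0.15, 0.1, -0.05])
```

Impulsive events raise kurtosis and crest factor sharply while changing RMS only moderately, which is why these features are often used together.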

Digital Twins for Infrastructure Asset Management

A physics-informed Digital Twin is the central integration artifact of the Asset Modeling Layer: a continuously updated virtual replica combining geometric fidelity (from 3D reconstruction), material and mechanical properties (from design documents and non-destructive evaluation), physics-based simulation (Finite Element Analysis), and live sensor data assimilation.

- Model Updating (System Identification): Bayesian updating algorithms (Markov Chain Monte Carlo, Sequential Monte Carlo / Particle Filters, Kalman filtering) align FE model parameters (Young's modulus, boundary conditions, section properties) with measured structural responses. AI surrogates (Gaussian Processes, neural network emulators) replace computationally expensive FE solves for real-time updating.

- Predictive Simulation (What-If Analysis): The calibrated twin enables rapid assessment of hypothetical scenarios: extreme load events (ship collision, seismic excitation, 100-year flood), retrofit and strengthening interventions, or maintenance deferral, supporting risk-informed investment decisions without physical testing.

- Lifecycle State Management: The twin maintains a temporal record of structural condition, integrating inspection findings, maintenance interventions, detected damage, and environmental exposure history, enabling longitudinal analysis of deterioration trajectories and predictive maintenance scheduling.
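
The simplest instance of the Bayesian model updating described above is a scalar Kalman measurement update. The sketch below tracks a single normalized stiffness factor and assumes, as a strong simplification, that an upstream identification step yields a direct noisy estimate of that factor; real twins update many parameters through a nonlinear FE or surrogate model. All names and numbers are illustrative.

```python
def kalman_update(mean, var, measurement, meas_var):
    """One scalar Kalman measurement update with a direct (identity) observation."""
    gain = var / (var + meas_var)                  # weight given to the new evidence
    new_mean = mean + gain * (measurement - mean)
    new_var = (1.0 - gain) * var                   # uncertainty shrinks after each update
    return new_mean, new_var

# Prior: as-designed stiffness (factor 1.0) with moderate uncertainty.
mean, var = 1.0, 0.04
# Three successive campaign estimates suggesting gradual stiffness loss.
for estimate in (0.90, 0.88, 0.86):
    mean, var = kalman_update(mean, var, estimate, meas_var=0.04)
```

With no process noise, as here, the filter treats the parameter as constant; adding a process-noise term between campaigns would let the estimate follow ongoing deterioration.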

Decision Support Layer: Risk Intelligence & Enterprise Integration

The Decision Support Layer is where AI Infrastructure Inspection generates direct business value, translating perception and modeling outputs into actionable risk intelligence, automated work-order generation, regulatory reporting, and seamless integration with enterprise asset management platforms. This is the layer that justifies the investment for the techno-commercial executive.

Multi-Factor Risk Scoring and Asset Prioritization

Risk scoring in infrastructure asset management integrates three principal factors per the ISO 31000 risk management framework:

| Risk Factor | AI-Derived Inputs | Computation Method |
|---|---|---|
| Probability of Failure (PoF) | Defect severity and extent (Perception Layer), deterioration rate (SHM/prognostics), remaining useful life estimate | Bayesian reliability model integrating structural fragility curves, deterioration model uncertainty, and inspection evidence; output: P(failure given current state) per limit state |
| Consequence of Failure (CoF) | Asset criticality (redundancy, traffic volume, load path), failure mode consequence (structural collapse, service disruption, environmental release), downstream asset dependencies | Weighted multi-attribute CoF matrix per asset class standards; consequence categories: safety, economic, environmental, reputational |
| Risk Priority Score | Combined PoF × CoF, normalized to asset portfolio; adjusted for inspection confidence (uncertainty quantification outputs) | Risk matrix classification (High/Medium/Low) per owner-operator risk tolerance framework; ranked work queue output to AMS/CMMS |
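
The PoF × CoF scoring and portfolio normalization in the table can be sketched as below. The band cut-offs (`high`, `medium`), the asset identifiers, and the input values are hypothetical; an owner-operator's risk tolerance framework would supply the real ones, and the adjustment for inspection confidence is omitted for brevity.

```python
def rank_assets(assets, high=0.5, medium=0.2):
    """Rank assets by PoF x CoF, normalized to the highest score in the portfolio.

    assets maps asset id -> (pof, cof), with pof in [0, 1] and cof on any
    consistent positive scale. Returns (asset_id, normalized_score, band) tuples.
    """
    raw = {asset_id: pof * cof for asset_id, (pof, cof) in assets.items()}
    top = max(raw.values())
    ranked = []
    for asset_id, score in sorted(raw.items(), key=lambda kv: kv[1], reverse=True):
        norm = score / top if top > 0 else 0.0
        band = "High" if norm >= high else "Medium" if norm >= medium else "Low"
        ranked.append((asset_id, round(norm, 3), band))
    return ranked

portfolio = {
    "bridge_A": (0.10, 9),    # unlikely to fail, severe consequence
    "culvert_B": (0.30, 2),   # more likely, limited consequence
    "tower_C": (0.02, 5),
}
work_queue = rank_assets(portfolio)
```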

Human-in-the-Loop Review and Active Learning

Engineering accountability and regulatory requirements mandate that AI recommendations are reviewed by qualified inspectors before triggering maintenance actions. The human-in-the-loop interface provides:

- Interactive defect visualization: geo-referenced defect instances overlaid on 2D orthomosaics and 3D textured meshes, with inspection history, comparison to previous campaigns (change detection), and uncertainty overlays.

- Accept / Correct / Reject workflow: inspector decisions are logged to the audit trail and fed back to the active learning engine as high-value training signal, the primary mechanism for continuous model improvement in deployment.

- Explanation and evidence: saliency maps (Grad-CAM, SHAP attributions), similar defect retrievals from the historical database, and the specific sensor evidence supporting each AI finding, building inspector trust and enabling informed acceptance/rejection.

Enterprise System Integration

The commercial value of AI Infrastructure Inspection is fully realized only when findings are integrated into operational workflows. Production integration patterns include:

- IBM Maximo Application Suite: Bi-directional REST API integration. AI-detected defects automatically generate Maximo work orders with asset ID, GPS location, defect severity, and recommended intervention; Maximo maintenance completion events trigger digital twin state updates.

- SAP Plant Maintenance (PM): RFC/BAPI and OData integration for automated PM notification creation, preventive maintenance plan optimization based on AI risk scores, and maintenance cost tracking against AI-predicted intervention timing.

- GIS Integration (Esri ArcGIS, QGIS): GeoJSON and Feature Service publication of geo-referenced inspection findings, enabling spatial analysis, corridor risk mapping, and regulatory GIS reporting.

- Regulatory Report Generation: LLM-assisted (GPT-4/Claude API) automated generation of inspection reports in FHWA, AASHTO, IEC, and API standard formats from structured AI findings, reducing report preparation time by 70-85%.
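As one concrete instance of the GIS pattern above, a geo-referenced finding can be serialized as a GeoJSON Feature. The sketch below follows the GeoJSON structure defined in RFC 7946; the property names (`asset_id`, `defect_type`, `severity`) and the helper names are assumptions for illustration, not a published schema of any of the systems listed.

```python
import json


def defect_to_geojson_feature(asset_id, defect_type, severity, lon, lat):
    """Build a GeoJSON Feature (RFC 7946) for one geo-referenced finding."""
    if not (-180.0 <= lon <= 180.0 and -90.0 <= lat <= 90.0):
        raise ValueError("coordinates must be WGS 84 longitude/latitude")
    return {
        "type": "Feature",
        # RFC 7946 orders positions as [longitude, latitude].
        "geometry": {"type": "Point", "coordinates": [lon, lat]},
        "properties": {
            "asset_id": asset_id,
            "defect_type": defect_type,
            "severity": severity,
        },
    }


def to_feature_collection(features):
    """Wrap features in a FeatureCollection ready for publication."""
    return {"type": "FeatureCollection", "features": list(features)}


def dumps_collection(features):
    """Serialize a list of features to a GeoJSON string."""
    return json.dumps(to_feature_collection(features))
```

The longitude-first ordering is the most common source of misplaced findings when GeoJSON is produced from GPS logs that store latitude first, which is why the range check is included.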

Pretraining, Domain Adaptation & Parameter-Efficient Tuning

Infrastructure inspection AI operates in a persistent data scarcity regime: defects are rare events, severe damage instances are inherently few, and annotation of pixel-wise segmentation masks is expensive (10-30 minutes per image for expert annotators). Effective mitigation of this challenge is architecturally essential, not optional.

Foundation Model Pretraining and Self-Supervised Learning

Self-supervised pretraining on large unlabeled inspection image corpora, using objectives such as masked image modeling (MAE, Masked Autoencoder), contrastive learning (MoCo-v3), or self-distillation (DINO), learns robust, transferable visual representations without annotation cost. Foundation models (SAM, CLIP, DINOv2) pretrained on internet-scale image corpora provide strong initialization for infrastructure inspection fine-tuning, with the key advantage that their structural texture, material, and geometric features generalize significantly better than object recognition features from ImageNet alone.

Parameter-Efficient Fine-Tuning (PEFT) for Edge Deployment

Full fine-tuning of large vision models (ViT-L/16, Swin-L: 200-300M parameters) is computationally expensive, and storing a separately fine-tuned copy of the full model for every asset class is impractical on edge UAV hardware. PEFT methods enable effective domain adaptation with minimal trainable parameters:

- Low-Rank Adaptation (LoRA): Injects trainable rank-decomposed matrices into attention weight matrices; adapts 0.1-1% of parameters while approaching full fine-tuning performance; enables Swin-Transformer adaptation for crack segmentation on 2,000 labeled samples.

- Visual Prompt Tuning (VPT): Prepends learnable prompt tokens to the input sequence of a frozen ViT encoder; requires only 1,000-5,000 parameters per task; highly effective for defect classification transfer across asset classes.

- Adapter Layers: Lightweight trainable bottleneck modules inserted between frozen transformer layers; enables rapid multi-task adaptation (bridge → tunnel → pipeline inspection) by swapping adapter weights without full model reload.
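To make the LoRA mechanism concrete, the following is a minimal pure-Python sketch of a single adapted linear layer, assuming the standard formulation in which a frozen weight W is augmented by a scaled low-rank product B·A. The class name, initialization scale, and `alpha` default are illustrative assumptions; a production system would use a tensor library rather than nested lists.

```python
import random


def matvec(m, v):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(w * x for w, x in zip(row, v)) for row in m]


class LoRALinear:
    """Frozen weight W (d_out x d_in) plus trainable low-rank update B @ A."""

    def __init__(self, weight, rank, alpha=1.0, seed=0):
        rng = random.Random(seed)
        d_out, d_in = len(weight), len(weight[0])
        self.weight = weight  # frozen: never updated during adaptation
        self.scale = alpha / rank
        # A starts small and random, B starts at zero, so the adapted layer
        # initially reproduces the frozen layer exactly.
        self.a = [[rng.gauss(0.0, 0.01) for _ in range(d_in)] for _ in range(rank)]
        self.b = [[0.0] * rank for _ in range(d_out)]

    def forward(self, x):
        base = matvec(self.weight, x)
        update = matvec(self.b, matvec(self.a, x))
        return [h + self.scale * u for h, u in zip(base, update)]


def lora_trainable_fraction(d_in, d_out, rank):
    """Trainable LoRA parameters relative to the full weight matrix."""
    return rank * (d_in + d_out) / (d_in * d_out)
```

For a single 1024 × 1024 projection at rank 8, `lora_trainable_fraction(1024, 1024, 8)` gives 0.015625, about 1.6% of that matrix. Because LoRA is typically applied to only some of a model's weight matrices, the fraction of the whole model that is trained is lower still.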

Synthetic Data Augmentation

Generative models (Stable Diffusion fine-tuned on inspection data, ControlNet-guided generation from structural CAD/depth maps) can synthesize photorealistic inspection images with controlled defect type, severity, lighting, and surface condition. Synthetic augmentation has demonstrated +5-15% IoU improvement for rare defect classes (hairline cracks, early-stage corrosion pitting) where real annotated data is insufficient. Domain randomization (randomizing lighting, texture, viewpoint, and environmental conditions during training) improves model robustness to the appearance variability inherent in real-world inspection campaigns.

Evaluation Framework: Technical & Operational Metrics

Rigorous, multi-dimensional evaluation is the cornerstone of trustworthy AI Infrastructure Inspection. The evaluation framework must span technical model performance, operational efficiency, and business outcome metrics, recognizing that a model with outstanding mAP that fails to reduce inspection cost or improve defect detection rates versus manual inspection has delivered no business value.

| Metric Category | Specific Metrics | Target / Benchmark | Measurement Method |
|---|---|---|---|
| Detection Accuracy | mAP@0.5, mAP@0.5:0.95 (object detection), IoU, Dice, BoundF (segmentation), Accuracy, AUC (classification) | mAP > 0.85; IoU > 0.75; AUC > 0.92 | COCO evaluation protocol; hold-out test set with expert annotation |
| Defect Quantification Accuracy | Crack width RMSE (mm); delamination area error (%); corrosion extent RMSE | Crack width RMSE < 0.3 mm; area error < 15% | Comparison vs. physical contact measurement (crack gauge, hammer sounding) |
| Calibration & Uncertainty Quality | Expected Calibration Error (ECE); reliability diagram; Brier Score; conformal coverage rate | ECE < 0.05; conformal coverage ≥ 1-α (e.g., 95%) | Held-out calibration set; conformal prediction evaluation protocol |
| SHM / Prognostic Accuracy | Natural frequency identification RMSE (Hz); damage localization accuracy (%); RUL prediction RMSE (years); failure probability Brier Score | Freq. RMSE < 0.05 Hz; damage localization > 85%; RUL RMSE < 1 year | Controlled damage experiments; long-term monitoring datasets (SHM benchmark datasets) |
| Operational Efficiency | Inspection time reduction (%); coverage rate improvement (km²/hr vs. manual); defect detection rate vs. manual inspection; false positive rate (re-inspection trigger rate) | > 40% time reduction; 3x coverage; < 10% false positive rate | Controlled field trials with parallel manual inspection; A/B deployment comparison |
| Business & Safety Outcomes | Unplanned downtime reduction (%); maintenance cost reduction (%); inspection cost per km/asset; near-miss and incident rate reduction | 30-50% downtime reduction; 25-35% cost reduction | Longitudinal tracking vs. pre-AI baseline; industry benchmark comparison |
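Three of the metrics in the table have compact definitions that are worth stating explicitly. The sketch below computes IoU and Dice for binary segmentation masks and Expected Calibration Error with equal-width confidence bins; the function names, the flat-list mask representation, and the choice of 10 bins are assumptions made for a self-contained example.

```python
def iou_and_dice(pred, truth):
    """pred/truth: flat sequences of 0/1 pixel labels of equal length."""
    if len(pred) != len(truth):
        raise ValueError("masks must have the same number of pixels")
    inter = sum(1 for p, t in zip(pred, truth) if p and t)
    pred_sum = sum(1 for p in pred if p)
    truth_sum = sum(1 for t in truth if t)
    union = pred_sum + truth_sum - inter
    # Two empty masks agree perfectly, so both metrics are defined as 1.0.
    iou = inter / union if union else 1.0
    dice = 2 * inter / (pred_sum + truth_sum) if (pred_sum + truth_sum) else 1.0
    return iou, dice


def expected_calibration_error(confidences, correct, n_bins=10):
    """ECE: bin-size-weighted mean of |accuracy - mean confidence| per bin."""
    bins = [[] for _ in range(n_bins)]
    for conf, ok in zip(confidences, correct):
        idx = min(int(conf * n_bins), n_bins - 1)  # conf == 1.0 goes in last bin
        bins[idx].append((conf, ok))
    n = len(confidences)
    ece = 0.0
    for members in bins:
        if not members:
            continue
        avg_conf = sum(c for c, _ in members) / len(members)
        accuracy = sum(1 for _, ok in members if ok) / len(members)
        ece += len(members) / n * abs(accuracy - avg_conf)
    return ece
```

A model that reports 0.9 confidence on four findings and is right on three of them has an ECE of 0.15 on that sample, well above the 0.05 target in the table.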

Robustness, Safety & Responsible AI Governance

Infrastructure AI systems operate in high-consequence environments where model failures can delay critical maintenance interventions or, in the opposite failure mode, trigger unnecessary costly interventions. Responsible deployment requires systematic governance across five dimensions:

- Distribution Shift Robustness: Production inspection data differs from training data in lighting conditions, weather (fog, rain, snow), surface weathering, camera changes, and seasonal vegetation. Mitigation: domain randomization during training, test-time augmentation, distributional shift detection (Maximum Mean Discrepancy, ODIN outlier detection), and automated model performance monitoring with retraining triggers.

- Over-Trust and Automation Bias Mitigation: The primary human factors risk in AI Infrastructure Inspection is inspector over-reliance on AI recommendations, that is, accepting AI findings without adequate critical review. Interface design must surface uncertainty prominently, require inspector confirmation for high-consequence decisions, and include adversarial testing (deliberately inserting borderline cases) in inspector training programs.

- Data Privacy and Security: Infrastructure inspection data contains sensitive asset geometry, security-relevant structural details, and location data for critical national infrastructure. Architectural requirements: end-to-end encryption (TLS 1.3 in transit, AES-256 at rest), role-based access control (RBAC), data residency enforcement (on-premises or sovereign cloud deployment for critical assets), and model adversarial robustness testing against data poisoning and evasion attacks.

- Algorithmic Fairness and Geographic Equity: AI inspection models must not systematically underperform for asset populations underrepresented in training data, a risk that is particularly relevant for older structures using historical construction practices, assets in developing-region geographies, or non-standard materials. Mitigation: stratified performance evaluation by asset type, age, geography, and material; targeted data collection for underrepresented populations; and performance equity SLOs in model deployment criteria.

- Governance Framework and Audit Trail: Regulatory-compliant AI governance requires: (a) complete audit trail of every AI finding, inspector action, and model version in production; (b) documented model cards (training data, known limitations, performance bounds) per NIST AI RMF and EU AI Act requirements; (c) explainability requirements for high-risk AI decisions (IEC 62443 for industrial, ASCE ethics code for civil engineering applications); (d) periodic external model audits and red-team adversarial testing.
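The distributional shift detection named in the first item above can be illustrated with Maximum Mean Discrepancy. The following sketch computes the biased estimate of squared MMD with an RBF kernel between training-time and production feature vectors; the `gamma` value and the drift `threshold` are hypothetical and would need to be calibrated on held-out data in any real deployment.

```python
import math


def rbf_kernel(x, y, gamma):
    """Gaussian (RBF) kernel between two feature vectors."""
    sq_dist = sum((a - b) ** 2 for a, b in zip(x, y))
    return math.exp(-gamma * sq_dist)


def mmd_squared(source, target, gamma=1.0):
    """Biased estimate of squared MMD between two samples of feature vectors."""

    def mean_kernel(xs, ys):
        total = sum(rbf_kernel(x, y, gamma) for x in xs for y in ys)
        return total / (len(xs) * len(ys))

    return (
        mean_kernel(source, source)
        + mean_kernel(target, target)
        - 2.0 * mean_kernel(source, target)
    )


def shift_detected(train_features, production_features, threshold=0.1, gamma=1.0):
    """Flag drift when MMD^2 exceeds a threshold (hypothetical value)."""
    return mmd_squared(train_features, production_features, gamma) > threshold
```

In practice the inputs would be embeddings from the perception model's backbone rather than raw pixels, and a flagged shift would feed the retraining triggers described above.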

Deployment Architecture: Edge, Cloud & Hybrid Patterns

The AI Infrastructure Inspection platform must operate across a deployment continuum from resource-constrained UAV edge nodes to high-performance cloud clusters, with the architectural discipline to allocate compute where it delivers maximum value within latency, bandwidth, and cost constraints.

Edge-Centric Deployment (Tier 1: On-Board)

- Platform: NVIDIA Jetson Orin NX (100 TOPS) or AGX Orin (275 TOPS); Qualcomm Cloud AI 100 Ultra for ultra-low-power requirements.

- Workloads: Real-time component detection for collision avoidance (YOLOv8n, TensorRT INT8, 60+ fps); image quality assessment; preliminary crack flagging; adaptive mission replanning; bandwidth-aware streaming prioritization.

- Model Optimization: Post-training quantization (INT8/FP16), structured pruning (50-70% parameter reduction), knowledge distillation from full teacher model; ONNX export + TensorRT optimization pipeline; latency target < 33ms for 30 fps real-time inference.
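Two of the ideas in the Model Optimization item are easy to show numerically: symmetric INT8 post-training quantization of a weight tensor, and the per-frame latency budget implied by a frame rate. The sketch below is a simplified per-tensor scheme in pure Python, offered as an illustration of the principle; it is not how TensorRT performs calibration, and the function names are assumptions.

```python
def quantize_int8(weights):
    """Symmetric per-tensor post-training quantization to signed 8-bit."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    # Round to the nearest integer step and clamp to the symmetric INT8 range.
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q, scale):
    """Map INT8 codes back to approximate floating-point weights."""
    return [v * scale for v in q]


def frame_budget_ms(fps):
    """Per-frame latency budget implied by a target frame rate."""
    return 1000.0 / fps
```

The reconstruction error of each weight is bounded by half a quantization step (`scale / 2`), which is why tensors with a few large outliers quantize poorly under a per-tensor scale. `frame_budget_ms(30)` is about 33.3 ms, the origin of the latency target above; at 60 fps the budget halves.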

Fog/Gateway Deployment (Tier 2: Site Server)

- Platform: NVIDIA EGX Industrial server, ruggedized industrial PC, or 5G Mobile Edge Computing (MEC) node at inspection site.

- Workloads: Full-resolution defect segmentation (U-Net, Swin-UNet); multi-modal fusion for RGB+IR+LiDAR; incremental 3D reconstruction; local data buffering and quality control; preliminary risk scoring; inspector review interface (disconnected operation).

Cloud/On-Premises Central Cluster (Tier 3)

- Platform: IBM Cloud (VPC with NVIDIA A100/H100 GPU instances); IBM Cloud Pak for Data on OpenShift for on-premises deployments with strict data residency; hybrid cloud via IBM Cloud Satellite.

- Workloads: Full pipeline training and retraining (model updates, active learning); centralized full-resolution 3D reconstruction and orthomosaic generation; digital twin simulation and updating; portfolio-level risk analytics; AMS/CMMS integration; regulatory report generation; MLOps (MLflow, Kubeflow) for model versioning, A/B testing, and automated retraining.

Case Studies: Bridges, Power Lines & Industrial Plants

Case Study: Bridge Deck AI Drone Inspection with Delamination Detection

- Business Objective: Automate FHWA element-level condition rating for highway bridge deck and superstructure, replacing a 3-day manual inspection requiring full lane closure with a 4-hour UAV AI Drone Inspection campaign requiring only shoulder closure.

- Sensing Platform: DJI Matrice 350 RTK with Zenmuse P1 (45MP RGB, GSD 3mm at 5m) + FLIR Boson 640 IR (640×512, 7.5-13.5μm LWIR) + RTK-GNSS for geo-referencing.

- AI Pipeline: RGB input → SfM-MVS 3D reconstruction (Metashape) → crack/spalling/corrosion detection and segmentation (Swin-UNet) → IR delamination detection (threshold + anomaly segmentation) → RGB+IR multi-modal fusion for delamination confidence boosting → geo-referenced defect GeoJSON → FHWA element rating computation → work order generation (IBM Maximo API).

- Demonstrated Results: IoU 0.83 for crack segmentation (0.2mm minimum crack width at 5m standoff distance); 91% concordance with certified bridge inspector element ratings (Cohen's Kappa 0.87); 65% inspection time reduction; 4× defect documentation completeness vs. manual.
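The concordance figure above is reported with Cohen's kappa, which corrects raw agreement for the agreement two raters would reach by chance. A minimal sketch of the standard formula follows; the function name is an assumption, and any ratings passed to it in an example are toy values, not data from the case study.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa: agreement between two raters, corrected for chance."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("ratings must be non-empty and of equal length")
    n = len(rater_a)
    # Observed agreement: fraction of elements given the same rating.
    observed = sum(1 for a, b in zip(rater_a, rater_b) if a == b) / n
    # Expected agreement if both raters assigned categories independently.
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    expected = sum(counts_a[c] * counts_b[c] for c in counts_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both raters used a single identical category
    return (observed - expected) / (1.0 - expected)
```

Kappa matters here because element condition ratings are usually imbalanced: when most elements are in good condition, two raters can reach high raw agreement without either being discriminating, and kappa discounts exactly that.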

Case Study: Power Line AI Drone Inspection with Thermal Hotspot and Vegetation Risk Detection

- Business Objective: Detect insulator defects, conductor hotspots, and vegetation encroachment risk along a 200km transmission corridor using AI Drone Inspection, replacing helicopter-based inspection with a target of 10× lower cost per km.

- Sensing Platform: Fixed-wing VTOL UAV (WingtraOne GEN II) with Sony RX1R II (42MP RGB) + FLIR Vue Pro R 640 (calibrated IR) + Riegl miniVUX-1DL LiDAR for vegetation clearance measurement.

- AI Pipeline: RGB+IR orthomosaic → tower and component detection (YOLOv8l) → insulator string defect classification (EfficientNet-B5) → IR hotspot detection (anomaly detection: threshold + PatchCore) → LiDAR point cloud → vegetation height model → NERC FAC-003 clearance analysis → risk-scored asset register output.

- Demonstrated Results: mAP@0.5 0.94 for tower/insulator detection; 97% hotspot detection rate (vs. 89% helicopter IR inspection); vegetation encroachment risk classification accuracy 88%; inspection cost reduction 78% vs. helicopter; full 200km corridor inspected in 3 days vs. 10 days helicopter.
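The clearance analysis step in the pipeline above reduces, at its core, to a distance check between classified LiDAR points. The sketch below uses a brute-force minimum distance and a three-level classification; the `required_m` and `warning_margin_m` values are placeholders supplied by the caller, since actual minimum clearances depend on line voltage and the applicable standard, and the function names are assumptions.

```python
import math


def min_clearance(conductor_points, vegetation_points):
    """Smallest 3D distance (m) between conductor and vegetation LiDAR points."""
    return min(
        math.dist(c, v) for c in conductor_points for v in vegetation_points
    )


def encroachment_risk(clearance_m, required_m, warning_margin_m=2.0):
    """Classify clearance against a required minimum (hypothetical thresholds)."""
    if clearance_m < required_m:
        return "violation"
    if clearance_m < required_m + warning_margin_m:
        return "warning"
    return "clear"
```

The pairwise loop is quadratic in the number of points, so it is only suitable for a single span; corridor-scale point clouds would need a spatial index such as a k-d tree or voxel grid.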

Case Study: Industrial Plant Integrated Visual + SHM Inspection

- Business Objective: Continuous structural health monitoring of petrochemical plant pipe racks and pressure vessels, integrated with periodic robotic visual inspection (Rover Inspection), to enable risk-based inspection (RBI per API 580) and predictive maintenance scheduling, targeting 40% reduction in unplanned shutdown events.

- Sensing Platform: Fixed IoT sensor network: 120 accelerometers (MEMS), 48 fiber Bragg grating strain sensors, distributed temperature sensing (DTS). Periodic inspection: Boston Dynamics Spot UGV with Rover Inspection payload (RGB+IR cameras, UT thickness probe).

- AI Pipeline: Continuous vibration time series → LSTM-based anomaly detection → Bayesian damage localization → FE digital twin update (surrogate model) → Spot visual inspection (crack, corrosion segmentation) → UT thickness data → integrated condition assessment → API 580 RBI risk matrix computation → SAP PM work order generation.

- Demonstrated Results: 3 incipient fatigue crack events detected 6-8 weeks before scheduled manual inspection; 42% reduction in unplanned shutdown events (12-month deployment); 31% reduction in total inspection cost; full integration with SAP PM maintenance execution workflow.
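The first stage of this pipeline flags anomalies in a continuous vibration series. The case study uses an LSTM for that step; the sketch below deliberately swaps in a much simpler rolling z-score detector, the kind of statistical baseline an LSTM would be compared against, to show the shape of the interface. The window length and threshold are illustrative assumptions.

```python
import statistics


def rolling_zscore_anomalies(series, window=20, threshold=3.0):
    """Return indices deviating more than `threshold` sigmas from the trailing window."""
    anomalies = []
    for i in range(window, len(series)):
        history = series[i - window:i]
        mean = statistics.fmean(history)
        std = statistics.pstdev(history)
        if std == 0.0:
            continue  # flat history: z-score undefined, skip this sample
        if abs(series[i] - mean) / std > threshold:
            anomalies.append(i)
    return anomalies
```

A baseline like this catches abrupt amplitude changes but not slow drifts or changes in frequency content, which is the gap that learned sequence models and the downstream Bayesian damage localization are intended to cover.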

Open Research Problems & Future Directions

Despite the substantial technical progress documented in this paper, several open challenges define the research and engineering frontier of AI Infrastructure Inspection:

- Standardized Benchmarks and Evaluation Protocols: The field lacks universally adopted benchmark datasets covering diverse asset types, defect classes, climatic conditions, and sensing modalities with consistent annotation protocols. Initiatives analogous to COCO (vision) or GLUE (NLP) are urgently needed for infrastructure inspection AI, enabling reproducible, cross-method comparison and accelerating capability development.

- Self-Calibrating Digital Twins: Current digital twins require periodic manual recalibration as structural properties evolve (concrete creep, steel fatigue, material aging). Self-calibrating twins that continuously learn from new sensor data, environmental exposures, and inspection findings while maintaining physical law consistency (thermodynamic feasibility, equilibrium constraints) remain an open research problem.

- Physics-Informed and Causal AI: Purely data-driven inspection models extrapolate poorly to loading, environmental, or damage scenarios not represented in training data, a critical limitation for rare extreme events (earthquakes, vessel collisions). Physics-Informed Neural Networks (PINNs), neural operators (DeepONet, FNO), and causal AI architectures that incorporate structural mechanics knowledge as inductive bias are active research frontiers with high practical impact.

- Federated Learning for Cross-Operator Intelligence: Individual infrastructure operators possess insufficient data to train robust, generalizable inspection AI. Federated learning architectures enabling cross-operator model training without sharing raw inspection data (protecting sensitive asset geometry and location) would dramatically expand available training signal while preserving data sovereignty and commercial confidentiality.

- Autonomous Adaptive Inspection Missions: Current UAV inspection missions are pre-planned with fixed coverage patterns. Adaptive inspection systems that dynamically re-plan coverage in response to on-board defect detections, concentrating data acquisition resources on high-interest areas, remain an open research problem requiring tight integration of perception, planning, and platform control.

- Human Factors, Trust Calibration, and XAI: The human-AI collaboration dynamics in inspection workflows (how certified inspectors form, update, and miscalibrate trust in AI recommendations) require systematic human factors research. Explainable AI (XAI) for infrastructure inspection must communicate uncertainty, evidence, and limitations in formats accessible to non-ML experts (civil engineers, regulators), not merely ML practitioners.
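The federated learning direction above rests on a simple aggregation step, which can be sketched with the federated averaging (FedAvg) rule: each operator trains locally and shares only model weights, which a coordinator combines in proportion to each operator's sample count. The function name and the flat-list weight representation are assumptions for illustration; secure aggregation, differential privacy, and handling of non-identical data distributions are out of scope for this sketch.

```python
def federated_average(client_updates):
    """FedAvg aggregation: sample-count-weighted mean of client weight vectors.

    client_updates: list of (weights, n_samples) pairs. Only weights leave
    each operator, never the raw inspection data.
    """
    total = sum(n for _, n in client_updates)
    if total <= 0:
        raise ValueError("at least one client must contribute samples")
    dim = len(client_updates[0][0])
    aggregated = [0.0] * dim
    for weights, n in client_updates:
        if len(weights) != dim:
            raise ValueError("all clients must share the same model shape")
        for i, w in enumerate(weights):
            aggregated[i] += w * n / total
    return aggregated
```

Weighting by sample count means an operator with a large inspection archive influences the shared model more than one with a handful of assets, which is usually desirable but also one reason performance equity across operators needs separate monitoring.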

Conclusion & Practitioner Roadmap

This white paper has presented a comprehensive Modular AI Architecture for Multi-Modal Infrastructure Inspection, a five-layer enterprise framework transforming infrastructure asset management from periodic, reactive, labor-intensive inspection into continuous, predictive, and AI-augmented structural intelligence. The convergence of heterogeneous sensing platforms, production-grade deep learning, and physics-informed digital twins now makes enterprise-scale AI Infrastructure Inspection technically mature and commercially compelling.

For the techno-commercial leader, the practical path to value realization follows a phased adoption roadmap:

| Phase | Objective | Key Actions | Success Metrics |
|---|---|---|---|
| Phase 1 (0-6 months): Foundation | Establish modular data and perception stack for highest-priority asset class | Deploy UAV/sensor platform for pilot asset class; establish Data Management Layer (ingest, 3D recon, QA); deploy initial single-modality Perception models; integrate with one AMS/CMMS | Data pipeline operational; first AI-assisted inspection report delivered; baseline inspection time and cost established |
| Phase 2 (6-18 months): Scale & Fuse | Multi-modal fusion and digital twin pilot for high-value assets; expand to 2-3 asset classes | Deploy multi-modal fusion models (RGB+IR+LiDAR); initialize digital twin for 3-5 critical assets; implement human-in-the-loop review workflow; establish active learning retraining pipeline | Multi-modal defect detection rate +15% vs. single-modal; digital twin model-to-measurement correlation > 90%; inspector review workflow adoption > 80% |
| Phase 3 (18-36 months): Enterprise & Govern | Portfolio-scale deployment, predictive maintenance integration; full governance framework | Scale to full asset portfolio; implement portfolio risk scoring and prioritization; integrate prognostics with maintenance budgeting; deploy responsible AI governance framework; achieve regulatory acceptance for AI-assisted inspection reports | 30-50% reduction in unplanned downtime; 25-35% inspection cost reduction; regulatory authority acceptance of AI inspection reports; full MLOps/governance operational |

Technical sophistication must be paired with rigorous attention to robustness, safety, regulatory compliance, and human-centered design. AI does not replace the qualified inspector or the structural engineer; it radically augments their capability, extending their reach, improving their consistency, and providing them with richer, more timely intelligence for engineering judgment.

Organizations that architect for modularity, invest in data infrastructure, govern responsibly, and maintain human engineering judgment at the center of decision-making will build not merely a technology capability but a sustainable competitive advantage in infrastructure asset management, delivering safer infrastructure, more efficient capital allocation, and measurably improved societal resilience.