What Is AI Visual Inspection in Manufacturing?

AI visual inspection in manufacturing uses computer vision and deep learning to automatically detect, classify, and locate defects in products as they move through the production line, at line speed, with consistent accuracy, on every shift. Cameras capture images; AI models trained on defect examples analyse each image in under 100 milliseconds; defects are flagged, classified, and logged with full traceability. Tritva by Ombrulla is a purpose-built AI visual inspection platform that delivers this capability across single stations, multi-line factories, and multi-site enterprise deployments.

- 99%+ defect detection accuracy: across surface, assembly, cosmetic, and material defect classes

- Sub-100ms inference: inspects at full line speed without throughput impact

- Consistent quality across all shifts: no inspector fatigue, no shift-to-shift variation

- Full traceability: every part, every decision, every image timestamped and logged

- Native integration with Petran APM: defect patterns feed predictive maintenance models automatically

Most quality managers face the same problem. First shift runs clean. Second shift starts, and suddenly customer complaints arrive about defects that should have been caught on the line. The inspector was tired. The lighting changed. Standards blurred between operators over a 12-hour period.

Manual inspection fails predictably. When you are checking hundreds of parts per hour across multiple shifts, human vision degrades, not because your inspectors are poor at their jobs, but because sustained visual attention at production speed is physiologically impossible to maintain consistently. The consequence is defect escapes, warranty returns, scrap, and the kind of customer PPM conversations that consume engineering time for weeks.

AI visual inspection solves this by removing variability from the inspection decision entirely. A camera captures every part. An AI model trained on your specific defect types analyses each image in milliseconds. Defects are flagged, classified, and logged with the same criteria applied to every part, every shift, every week, regardless of time of day, operator, or production speed.

This guide covers how AI visual inspection works in real manufacturing environments, what it costs, how to build training datasets that produce reliable models, how to deploy successfully across industries, and how to choose the right platform. If you are dealing with defect escapes, high scrap rates, or inconsistent quality across shifts, this is what actually works on factory floors in 2026.

What Is AI Visual Inspection and How Does It Work?

AI visual inspection uses computer vision and deep learning to automatically detect, classify, and locate defects in manufactured products as they move through the production line. The core workflow is: a camera captures images as parts pass the inspection station; an AI model analyses each image in under 100 milliseconds; defects are identified, classified by type, located with a bounding box, and scored by severity; conforming parts continue down the line; non-conforming parts are flagged for rejection or operator review. Every decision is logged with the image, timestamp, defect category, severity score, and disposition outcome, creating a complete and auditable quality record.

The technology is not magic. It is pattern recognition at scale: ML models trained on labelled examples of good parts and defective parts learn to distinguish the visual signatures of specific defect types from the normal variation that exists within an acceptable product range. The sophistication lies in handling that variation. Real production parts are not identical, material properties shift within specification limits, and process conditions drift; a model that cannot handle this variation generates false rejects that create their own cost and operational burden.
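The logged decision described above can be pictured as a small data structure. The following is a minimal sketch, not Tritva's actual schema: the class names, field names, the 0 to 1 severity scale, and the `reject_at` / `review_at` thresholds are all illustrative assumptions.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass
class Detection:
    defect_class: str                 # e.g. "scratch" (hypothetical label)
    bbox: tuple[int, int, int, int]   # x, y, width, height in pixels
    severity: float                   # assumed scale: 0.0 (negligible) to 1.0 (worst)


@dataclass
class InspectionRecord:
    part_id: str
    image_path: str
    detections: list[Detection]
    disposition: str                  # "pass", "review", or "reject"
    timestamp: str                    # ISO 8601, UTC


def disposition_for(detections, reject_at=0.7, review_at=0.3):
    """Map the worst detected severity to a disposition (thresholds are assumptions)."""
    worst = max((d.severity for d in detections), default=0.0)
    if worst >= reject_at:
        return "reject"
    if worst >= review_at:
        return "review"
    return "pass"


def make_record(part_id, image_path, detections):
    """Build the auditable record: image, timestamp, defects, and outcome."""
    return InspectionRecord(
        part_id=part_id,
        image_path=image_path,
        detections=list(detections),
        disposition=disposition_for(detections),
        timestamp=datetime.now(timezone.utc).isoformat(),
    )
```

Keeping the disposition rule separate from the record makes the acceptance criteria explicit and easy to review against the written defect specification.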

Key Technologies Inside AI Visual Inspection

- Computer vision: Extracts visual features from images (edges, textures, colours, shapes, gradients), creating a numerical representation that machine learning models can analyse.

- Deep learning (CNN): Convolutional Neural Networks learn hierarchical patterns from labelled training examples. Unlike rule-based systems, CNNs recognise complex, subtle, and variable defect signatures without explicit programming.

- Edge computing: AI inference runs on dedicated compute hardware at the inspection station, processing images in real time without network round-trips. Sub-100ms decisions require local processing; cloud connectivity is used for analytics and model management, not for line-speed decisions.

- Transfer learning: Tritva models start from pre-trained foundations and are fine-tuned on your specific defects and materials, dramatically reducing the volume of labelled training images required compared to training from scratch.

AI Visual Inspection vs Traditional AOI: The Critical Differences

Traditional Automated Optical Inspection (AOI) works on rule-based logic: you program explicit thresholds, such as 'reject if scratch length exceeds 2mm', and the system flags anything outside those rules. This approach works for simple, well-defined defects in stable production conditions. It fails when conditions change: when a new material batch shifts colour slightly, when a new SKU has different surface texture, when a subtle cosmetic defect cannot be defined with a simple geometric rule.

AI visual inspection learns from examples rather than rules. The comparison table below shows the structural differences that determine which approach is appropriate for your application.

| Dimension | Traditional AOI (Rule-Based) | AI Visual Inspection (Tritva) |
|---|---|---|
| Detection logic | Rule-based thresholds (e.g. 'reject if scratch >2mm') | Pattern-based: learns from labelled defect examples |
| Handles variability | Struggles: rules break when material, colour, or SKU changes | Adapts: models account for normal production variation |
| Complex defects | Hard to define rules for subtle cosmetic or shape defects | Classifies subtle, multi-class, and novel defects reliably |
| Setup and change | Requires engineer time to reprogram rules per variant | Retrain model with new examples; no rule reprogramming |
| False reject rate | Higher: rigid thresholds trigger on normal variation | Lower: distinguishes genuine defects from acceptable variation |
| Data output | Pass/fail signal only | Image, defect class, severity, location, confidence score |
| Continuous improvement | Manual rule updates: slow and resource-intensive | Model retrained on new production data: systematic improvement |

When to Use AI vs Traditional AOI

Traditional AOI works for: simple, geometrically definable defects; stable single-SKU production with controlled, fixed lighting; applications where defect criteria never change. AI visual inspection is superior for: complex or subtle defects; variable materials, colours, or surface textures; multi-SKU lines; cosmetic or subjective quality criteria; any application where rule-programming has failed to reach acceptable accuracy.

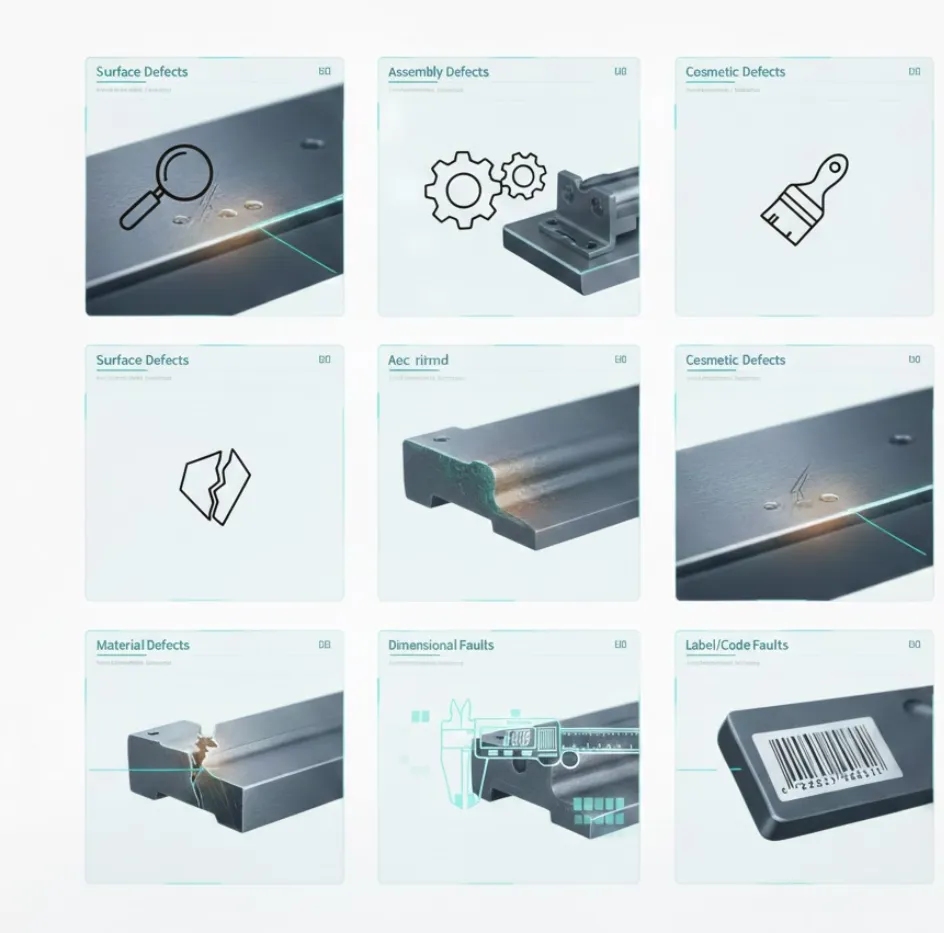

Manufacturing Defect Types AI Visual Inspection Can Detect

[AI defect detection](/solutions/ai-visual-inspection) handles both obvious and subtle defects, but precise scoping of what you are detecting is the first step in deployment. The defect classification you define before training determines the model architecture, the training data you need to collect, and the imaging configuration required to capture those defects reliably.

Defect Types Detectable by AI Visual Inspection

| Defect Category | Examples | Imaging Approach | Typical Industry |
|---|---|---|---|
| Surface defects | Scratches, dents, corrosion, pits, orange peel, dust contamination | Directional / raking lighting to exaggerate texture contrast | Automotive body, metal stamping, painted parts |
| Assembly defects | Missing fasteners, wrong parts, incorrect placement, harness routing | Structured lighting + reference image comparison | Automotive assembly, electronics, white goods |
| Cosmetic defects | Colour variation, uneven coating, finish inconsistency, print quality | Diffuse lighting for even illumination across full surface | Consumer goods, packaging, branded components |
| Material defects | Cracks, porosity, voids, inclusions, subsurface anomalies | Backlighting (translucent), thermal, or X-ray for subsurface | Castings, forgings, welds, plastic mouldings |
| Dimensional faults | Out-of-spec geometry, warping, mismatch between mating faces | Calibrated stereo vision or structured light 3D | Precision machined parts, injection mouldings |
| Label / code faults | Missing label, incorrect batch code, barcode unreadable, wrong serial | High-resolution camera with OCR / barcode decoder | Pharma packaging, electronics serialisation |

Why Manufacturing Defect Detection Is Technically Harder Than General Computer Vision

Defect detection in manufacturing is not the same problem as object classification (identifying 'cat or dog' from an image). You are detecting deviations from acceptable variation within a population of parts that are similar but not identical. Material properties vary batch to batch. Process conditions drift within specification limits. Surface texture differs between suppliers. Your AI model must reliably distinguish genuine defects from normal, acceptable variation, and must do so at line speed, across shifts, as conditions change over months.

This is why training data quality and representative coverage matter more than model architecture. A model trained on 500 defect images from one material batch and one lighting condition will underperform when material batch, shift lighting, or process conditions change. Training data must represent the full range of acceptable variation as well as defects at different severity levels, orientations, and surface conditions.

Tritva Vision's annotation and training interface allows quality engineers, not data scientists, to continuously add new production images, retrain models, and deploy updates as production conditions evolve.

Tritva by Ombrulla: The Purpose-Built AI Visual Inspection Platform

Most [AI visual inspection](/solutions/ai-visual-inspection) implementations struggle not because the AI technology is insufficient, but because the deployment infrastructure is not built for the realities of production environments: models degrade as conditions change and no one has a clear process to retrain them; inspection data lives in siloed station reports rather than a unified quality dataset; scaling from one station to ten requires ten separate integrations and ten separate support contracts.

Tritva Vision: Train Without Data Scientists

Tritva Vision is Ombrulla's model training interface designed for quality engineers, not ML specialists. Engineers annotate defect images directly in the platform, initiate training, monitor model performance, and deploy updates without writing code or engaging a data science team. This reduces the time from 'we have defect images' to 'the model is live on the line' from months to weeks.

Universal Capture Source Support

Tritva ingests images from fixed line cameras, handheld devices, drones, and rovers, supporting both inline production inspection and offline or infrastructure inspection workflows in a single platform. No separate systems for different capture methods.

Edge-First Architecture

AI inference runs on edge hardware at the inspection station: sub-100ms decisions with no cloud dependency for the reject/accept decision. Cloud connectivity is used for model management, analytics, and enterprise reporting, not for latency-sensitive production decisions.

Petran APM Integration: Closed-Loop Quality-Maintenance

Defect pattern data from Tritva feeds Petran's predictive maintenance models automatically. A spike in surface scratch frequency on a stamping line triggers Petran to correlate with press vibration data and schedule proactive bearing maintenance, resolving the quality and reliability problem from a single root cause rather than treating them as two separate events.

Audit-Ready Compliance Logging

Every inspection decision (image, timestamp, defect class, severity, disposition) is automatically logged and stored. Tritva generates audit-ready traceability records for ISO 9001, IATF 16949, FDA 21 CFR Part 11, and API requirements without additional administrative overhead.

Multi-Site Enterprise Deployment

A single Tritva instance manages inspection models, performance dashboards, and compliance records across all production lines and facilities. Rollout of model updates or new defect classes propagates from a central console, with no manual site-by-site configuration.

Proven Tritva Results

- Defect escape rate: 0.8% → 0.06% (automotive tier 1 stamping plant, 6 months post-deployment)

- 60% reduction in customer complaints: automotive paint inspection deployment

- 75% cut in field failures from assembly errors: automotive assembly verification

- 28 FTE quality inspectors redeployed to root cause and process engineering roles

- FDA audit preparation: 3 weeks → 4 days (pharmaceutical batch traceability)

- Eliminated 100% inspection sampling gaps: metal stamping operation that was catching only 10% of defects under its previous approach

See how Tritva catches what your inspectors miss: request a demo with your actual production parts.

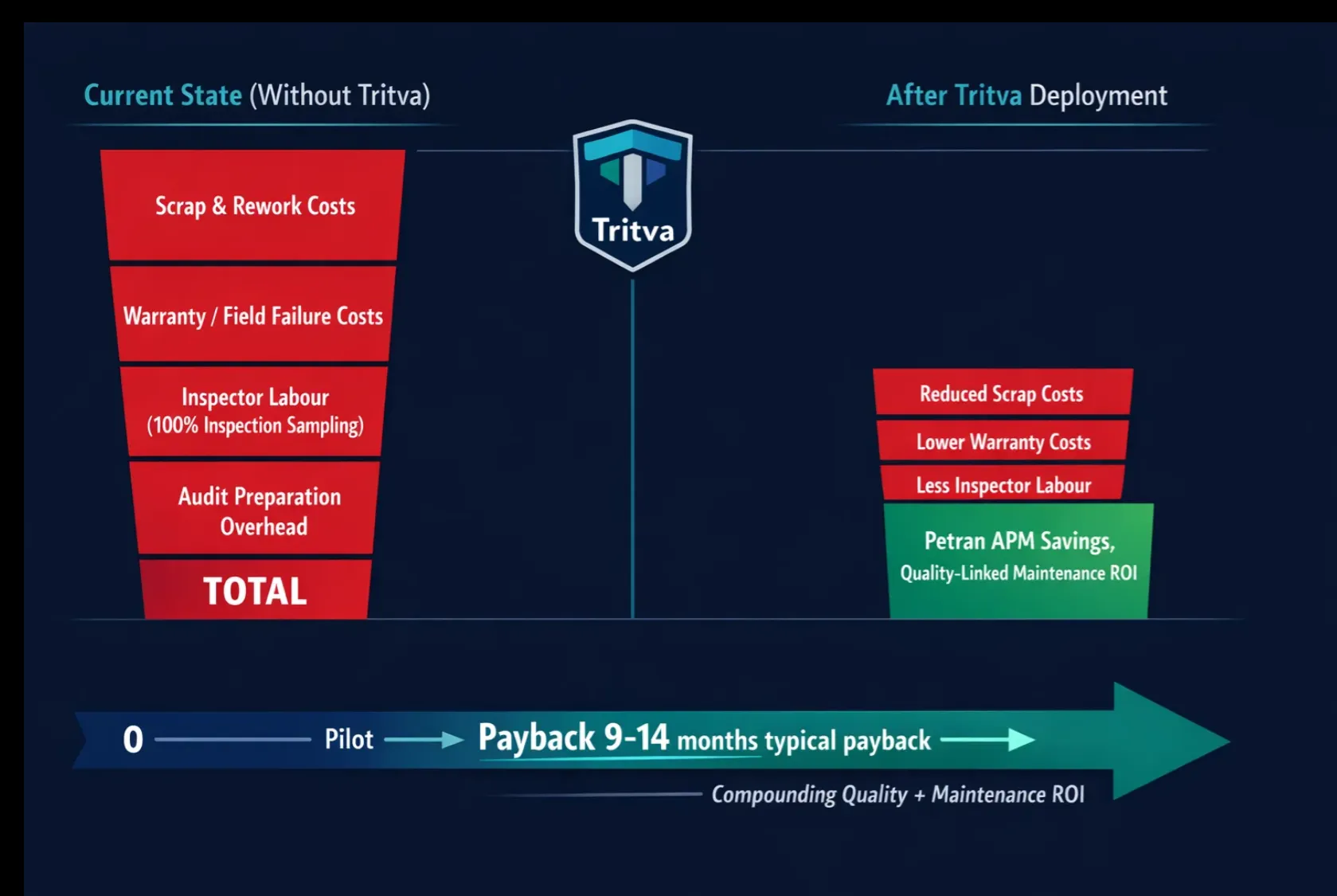

AI Visual Inspection ROI: How to Build the Business Case

AI visual inspection is one of the few Industry 4.0 investments that can be justified in straightforward financial terms: you quantify the current cost of poor quality (COPQ), meaning scrap, rework, warranty claims, containment costs, and inspector labour; model the impact of improved pre-shipment defect detection; add throughput and OEE benefits; subtract platform and integration costs; and produce payback, NPV, and ROI figures that senior leadership can evaluate against any other capital investment.

The key discipline is accuracy in both directions: do not inflate the AI performance assumptions, and do not ignore the implementation and ongoing operational costs (retraining, MLOps, support). Conservative and aggressive scenarios both need to clear your hurdle rate for the investment to be defensible.

AI Visual Inspection ROI Value Drivers

| Value Driver | Mechanism | Typical Impact | Tritva Context |
|---|---|---|---|
| Scrap and rework cost reduction | AI catches defects at the point of creation, before downstream value is added | 20–50% reduction | Defect caught at press saves full machining + assembly cost on that part |
| Defect escape / warranty cost | Higher pre-shipment catch rate reduces field escapes and warranty claims | 60–90% reduction | Tritva automotive: 75% cut in field failures from assembly errors |
| Inspection labour redeployment | Automated 100% inspection replaces manual sampling | 15–30 FTE equivalents | Tritva deployment: 28 FTE redeployed; no involuntary redundancies |
| Throughput / OEE improvement | Eliminates inspection bottlenecks; faster defect feedback accelerates root cause | 5–15% OEE uplift | Inline inspection at line speed vs. offline batch inspection |
| Audit and compliance cost | Automated traceability logging eliminates manual record-keeping | 60–80% time saving | Tritva pharma: FDA audit prep 3 weeks → 4 days |
| Predictive maintenance trigger | Defect pattern data from Tritva feeds Petran APM | Compounding ROI | Tritva + Petran: $420K unplanned press stoppage avoided per automotive case |

ROI Calculation Starting Point

- Step 1: Baseline COPQ. Calculate (monthly output volume × defect rate) × (scrap/rework cost per defect) + (escape rate × warranty/return cost per escape) + annual inspector labour cost, with every term expressed over the same period.

- Step 2: AI delta. Model the improvement in pre-shipment catch rate (typically +25–40 percentage points), the reduction in escapes (typically 60–90%), and the inspector redeployment value.

- Step 3: Subtract platform cost (licence + hardware + integration + MLOps).

- Step 4: Calculate payback, NPV at your hurdle rate, and IRR. Run conservative (AI catches 25% more), base (40% more), and aggressive (55% more) scenarios. If the conservative scenario still clears the hurdle rate, proceed.
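The calculation above can be sketched in a few lines of Python. This is an illustrative model under stated assumptions, not a financial tool: all terms are annualised, escapes are counted as annual volume × escape rate, each scenario is reduced to a single hypothetical `saving_fraction` of baseline COPQ, and every number in the usage section is made up for demonstration.

```python
def annual_copq(monthly_volume, defect_rate, cost_per_defect,
                escape_rate, cost_per_escape, annual_inspector_labour):
    """Baseline cost of poor quality per year (assumes rates are per unit produced)."""
    annual_volume = monthly_volume * 12
    internal = annual_volume * defect_rate * cost_per_defect   # scrap and rework
    external = annual_volume * escape_rate * cost_per_escape   # warranty and returns
    return internal + external + annual_inspector_labour


def simple_payback_years(annual_saving, upfront_cost, annual_running_cost):
    """Years to recover the upfront cost; infinite if running costs eat the saving."""
    net = annual_saving - annual_running_cost
    return float("inf") if net <= 0 else upfront_cost / net


def npv(rate, upfront_cost, annual_net_saving, years):
    """Net present value with the upfront cost at year 0 and equal yearly savings."""
    discounted = sum(annual_net_saving / (1 + rate) ** t for t in range(1, years + 1))
    return discounted - upfront_cost


if __name__ == "__main__":
    # All inputs below are placeholders, not figures from any deployment.
    baseline = annual_copq(50_000, 0.02, 40.0, 0.002, 900.0, 300_000.0)
    upfront, running, hurdle = 400_000.0, 80_000.0, 0.12
    for name, saving_fraction in [("conservative", 0.25), ("base", 0.40), ("aggressive", 0.55)]:
        saving = baseline * saving_fraction
        print(name,
              round(simple_payback_years(saving, upfront, running), 2),
              round(npv(hurdle, upfront, saving - running, 5)))
```

If the conservative scenario produces a positive NPV at the hurdle rate, the case holds even when the performance assumptions are pessimistic.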

Implementing AI Visual Inspection: What Actually Works on Factory Floors

Implementation quality determines whether your [AI visual inspection](/solutions/ai-visual-inspection) deployment delivers the projected ROI or becomes an expensive system that operators work around. The following guidance reflects the failure modes seen most frequently in deployments that underperform, and the practices that characterise deployments that succeed.

Step 1: Pre-Deployment Assessment (Define Before You Build)

The most common cause of underperforming AI inspection deployments is an insufficiently specific use case definition. 'Detect defects on Part X' is not a specification. 'Detect surface scratches deeper than 0.3mm and assembly errors where Fastener Y is missing' is a specification. Before purchasing hardware or writing a single line of code, document: the exact defect types to be detected; the acceptance criteria per defect class (at what severity level is a part rejected?); the line speed and resulting image capture window per part; the current defect rate and escape rate; and the COPQ baseline you are trying to reduce.

The Specification Test: If two quality engineers would disagree on whether a given part should be rejected, your defect specification is not complete. AI models cannot be more consistent than the human consensus on what constitutes a defect. Resolving borderline cases explicitly (deciding the rule, not just observing the disagreement) is the most valuable pre-deployment investment you can make.

Step 2: Imaging Configuration (This Determines 80% of Your Outcome)

Camera selection, positioning, and lighting are the most consequential engineering decisions in an AI visual inspection deployment. Poor imaging defeats even the most sophisticated AI model. An algorithm cannot detect what the camera cannot see, and defects that appear clearly under one lighting condition may be invisible under another.

- Camera resolution and speed: Resolution must be sufficient to capture the smallest defect at the highest line speed without motion blur. Calculate required pixel density per defect at the part's maximum velocity. For fast lines, consider pulsed strobing to freeze motion.

- Lighting type by defect class: Diffuse lighting for general surface inspection. Directional/raking lighting for scratches and pits. Backlighting for translucent materials. Coaxial lighting for specular surfaces. Thermal imaging for subsurface defects.

- Camera angle and coverage: Position to see defect-prone surfaces clearly. For 3D parts, multiple camera angles may be required. Ensure the inspection window is consistent across all part variants.

- Enclosure and vibration control: Industrial enclosures protect cameras from dust, coolant, and vibration. Vibration from nearby presses or conveyors degrades image quality, so mounting isolation matters, particularly for high-resolution applications.

Test imaging performance under real production conditions before training any model. Capture 100+ images at actual line speed, under actual production lighting, with actual production parts, including parts from different material batches and process settings. If images look poor before training, no model will compensate.
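The resolution and motion-blur guidance in this step reduces to simple arithmetic. The sketch below assumes a common rule of thumb of at least three pixels across the smallest defect and a blur budget of one pixel; both defaults, and all function names, are illustrative assumptions to be replaced with values validated on your own line.

```python
import math


def required_pixel_size_mm(smallest_defect_mm, pixels_per_defect=3):
    """Largest object-space pixel size that still puts N pixels across the defect."""
    return smallest_defect_mm / pixels_per_defect


def required_sensor_pixels(field_of_view_mm, pixel_size_mm):
    """Pixels needed along one axis to cover the field of view at that pixel size."""
    return math.ceil(field_of_view_mm / pixel_size_mm)


def max_exposure_s(pixel_size_mm, line_speed_mm_s, max_blur_px=1.0):
    """Longest exposure (or strobe pulse) that keeps motion blur within the budget."""
    return max_blur_px * pixel_size_mm / line_speed_mm_s


if __name__ == "__main__":
    # Hypothetical station: 0.5 mm defects, 200 mm field of view, 500 mm/s line speed.
    px = required_pixel_size_mm(0.5, pixels_per_defect=4)
    print("pixel size (mm):", px)
    print("pixels across field of view:", required_sensor_pixels(200, px))
    print("max exposure (s):", max_exposure_s(px, 500))
```

If the maximum exposure comes out shorter than the camera can achieve with the available light, that is the signal to consider pulsed strobing or a brighter source.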

Step 3: Production Line Integration

The inspection system needs three integration points: trigger signals (a PLC or proximity sensor tells the camera when to capture, and timing must be precise enough to capture the part correctly positioned); reject mechanisms (an automated diverter or operator alert must be able to act on the AI decision before the non-conforming part moves downstream); and data connections (defect records must flow into your MES, SCADA, or QMS for traceability, reporting, and SPC purposes).

Integration quality often determines whether a deployment scales successfully beyond the pilot station. Systems that require manual data re-entry from the inspection station into the QMS, or that cannot trigger reject mechanisms reliably, create operational workarounds that erode the value of the AI decision. Tritva integrates via standard industrial protocols (OPC-UA, Modbus, EtherNet/IP, REST API), with documented integration patterns for common PLC and MES platforms.
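One way to sanity-check the reject mechanism is a latency budget: the part must not reach the diverter before the decision and actuation have completed. The following sketch assumes a constant line speed and treats the four latency terms and the 80% safety margin as hypothetical inputs you would measure on your own station.

```python
def time_to_diverter_ms(distance_mm, line_speed_mm_s):
    """Travel time from the camera position to the reject point, in milliseconds."""
    return 1000.0 * distance_mm / line_speed_mm_s


def reject_budget_ok(distance_mm, line_speed_mm_s,
                     capture_ms, inference_ms, plc_ms, actuator_ms,
                     margin=0.8):
    """True if total decision latency fits within a fraction of the travel time."""
    needed_ms = capture_ms + inference_ms + plc_ms + actuator_ms
    return needed_ms <= margin * time_to_diverter_ms(distance_mm, line_speed_mm_s)


if __name__ == "__main__":
    # Hypothetical line: diverter 500 mm after the camera, belt at 1000 mm/s.
    print(reject_budget_ok(500, 1000, capture_ms=10, inference_ms=100,
                           plc_ms=20, actuator_ms=50))
```

When the check fails, the usual options are moving the reject point further downstream or shortening the slowest stage, rather than relaxing the margin.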

Step 4: Operator Training and Trust Building

The technical challenge of deploying AI visual inspection is easier than the human challenge. Operators who have been making inspection decisions for years are being asked to trust a system that cannot explain its decisions in plain language; it simply says 'reject.' Building trust requires demonstrating reliability, not asserting it.

The most effective trust-building approach is shadow mode deployment: run the AI system in parallel with existing inspection for 4–6 weeks, displaying AI decisions to operators without acting on them. When operators observe the AI correctly catching defects that they also would have caught, and correctly passing parts that are genuinely acceptable, trust builds organically. Override logging during shadow mode also reveals cases where operator and AI disagree, which should trigger root cause analysis rather than automatic assumption of operator or AI error.

The Override Logging Insight: When an operator overrides an AI decision (accepting a part the AI rejected, or rejecting a part the AI accepted), that override is the most valuable data point in your quality system. Systematically reviewing overrides reveals: defect classes where the model needs retraining, lighting conditions that produce inconsistent images, and emerging defect types not in the original training data. Tritva logs every override with the image, decision, and override rationale.
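A shadow-mode log can be summarised with a few counts. The sketch below assumes each logged part yields a pair of decisions, AI then operator, each either 'accept' or 'reject'; the function name and the output keys are illustrative, and neither side is treated as ground truth.

```python
from collections import Counter


def shadow_summary(pairs):
    """Summarise (ai_decision, operator_decision) pairs from a shadow-mode run."""
    counts = Counter(pairs)
    total = sum(counts.values())
    if total == 0:
        raise ValueError("no decisions logged")
    agree = counts[("accept", "accept")] + counts[("reject", "reject")]
    return {
        "parts": total,
        "agreement_rate": agree / total,
        # Disagreements are review candidates, not automatically errors by either side.
        "ai_reject_operator_accept": counts[("reject", "accept")],
        "ai_accept_operator_reject": counts[("accept", "reject")],
    }


if __name__ == "__main__":
    log = [("accept", "accept")] * 90 + [("reject", "reject")] * 6 \
        + [("reject", "accept")] * 3 + [("accept", "reject")] * 1
    print(shadow_summary(log))
```

Tracking the two disagreement counts separately matters because they point to different follow-ups: one suggests possible false rejects, the other possible missed defects.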

Building Training Datasets That Produce Reliable Models

The AI model is only as good as the data used to train it. This is the most direct statement in AI visual inspection, and the most frequently underestimated challenge in deployments that underperform.

What 'Enough' Training Data Actually Means

There is no universal answer to 'how many images do I need?' It depends on defect class complexity, visual similarity between defect types, variability within the acceptable product range, and whether you are training from scratch or using transfer learning. Practical guidance: for transfer learning on a well-defined defect class with clear visual signatures, 50–150 labelled defect examples per class is often sufficient for a working initial model. For complex or visually subtle defects with high within-class variability, you may need 300–800 examples per class to achieve reliable precision and recall.

More important than absolute defect count is coverage of variation: the training data must include images from multiple material batches, multiple shifts, multiple lighting conditions within the acceptable range, and defects at different severity levels within the reject zone. A model trained on perfect, consistent images under ideal conditions will degrade rapidly in production.

Data Collection Strategies

- Production collection: Capture images from live production lines using the actual inspection camera and lighting configuration. Label defect images as they occur. This produces the most realistic training data but is slow to build defect examples in well-controlled operations where defects are rare.

- Staged defect samples: Deliberately create or procure defective parts to label. Faster than waiting for production defects. Risk: staged defects may not fully represent the natural variation in defect appearance from real production failures.

- Hybrid approach (recommended): Begin with staged samples to build initial model coverage. Deploy in shadow mode to collect real production defect images. Retrain quarterly with a growing production dataset. This produces the fastest initial deployment with systematic improvement over time.

- Data augmentation: Apply rotation, flipping, brightness/contrast variation, and cropping to existing labelled images to artificially expand dataset size, which is particularly useful for rare defect classes where production examples are scarce.
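The augmentation operations listed above are simple geometric and intensity transforms. The sketch below shows three of them on a tiny grayscale image held as nested lists of 0 to 255 values, using only the standard library so it runs anywhere; a production pipeline would normally use an image or array library instead, and the function names here are illustrative.

```python
def flip_horizontal(img):
    """Mirror each row left to right."""
    return [row[::-1] for row in img]


def rotate_90_clockwise(img):
    """Rotate the image a quarter turn clockwise."""
    return [list(col) for col in zip(*img[::-1])]


def adjust_brightness(img, factor):
    """Scale pixel values by a factor, clamped to the 0-255 range."""
    return [[min(255, max(0, round(p * factor))) for p in row] for row in img]


def augment(img, brightness_factors=(0.8, 1.2)):
    """Return a list of augmented variants of one labelled image."""
    variants = [flip_horizontal(img), rotate_90_clockwise(img)]
    variants += [adjust_brightness(img, f) for f in brightness_factors]
    return variants


if __name__ == "__main__":
    sample = [[10, 200], [30, 40]]
    for variant in augment(sample):
        print(variant)
```

Two cautions apply: bounding-box labels must be transformed along with the image, and a transform should only be used if the result could plausibly occur on the line (for example, do not flip parts whose orientation is fixed).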

Labelling Best Practices

Labelling consistency beats labelling speed. Inconsistently labelled training data (where the same defect is labelled 'Minor scratch' by one engineer and 'Major scratch' by another, or where borderline parts are labelled differently across labellers) produces models with erratic precision and recall that cannot be systematically improved. Establish a written labelling guide with example images for each severity level before labelling begins. Use the same labellers throughout a labelling session where possible. Review inter-labeller agreement before incorporating any batch into training.
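Inter-labeller agreement can be quantified with Cohen's kappa, a standard statistic that corrects raw agreement for the agreement expected by chance. The sketch below is a plain implementation for two labellers who labelled the same images in the same order; the article does not prescribe a specific metric, so treating kappa as the review criterion is an assumption.

```python
from collections import Counter


def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa for two labellers over the same items (1.0 = perfect agreement)."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("label lists must be non-empty and the same length")
    n = len(labels_a)
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    freq_a, freq_b = Counter(labels_a), Counter(labels_b)
    # Chance agreement: probability both labellers pick the same class independently.
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both labellers used a single identical class throughout
    return (observed - expected) / (1 - expected)


if __name__ == "__main__":
    engineer_1 = ["minor", "major", "minor", "ok", "major", "ok"]
    engineer_2 = ["minor", "minor", "minor", "ok", "major", "ok"]
    print(round(cohens_kappa(engineer_1, engineer_2), 3))
```

A low kappa on a batch usually means the labelling guide needs sharper examples for the classes where the labellers diverge, which is cheaper to fix before training than after.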

Continuous Learning and Model Maintenance

AI visual inspection models are not fire-and-forget systems. Models trained on data from Month 1 of production will face data from Month 12, when material batches have changed, some process parameters have drifted, a new defect type has emerged, and the product mix has shifted. Without systematic model maintenance, detection performance degrades and the AI system becomes less reliable than the inspectors it replaced.

Tritva tracks model performance per defect class continuously, detecting statistical shifts in confidence score distributions, precision/recall trends, and override rate changes that indicate model drift. When drift is detected, Tritva flags the relevant defect class for retraining and presents the operator override images from that period as candidate training data. The retraining workflow runs inside Tritva Vision, managed by quality engineers without data science involvement.
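To make "a statistical shift in confidence score distributions" concrete, here is a generic sketch using the Population Stability Index (PSI). This is a swapped-in, widely used drift statistic, not a description of how Tritva detects drift; the bin count, the `eps` floor, and the commonly quoted rule-of-thumb thresholds (around 0.1 for moderate and 0.25 for large shift) are assumptions to tune per defect class.

```python
import math


def psi(reference, current, bins=10, eps=1e-6):
    """Population Stability Index between two sets of confidence scores in [0, 1]."""
    if not reference or not current:
        raise ValueError("both score lists must be non-empty")

    def proportions(scores):
        counts = [0] * bins
        for s in scores:
            idx = min(max(int(s * bins), 0), bins - 1)  # clamp into a valid bin
            counts[idx] += 1
        # Floor empty bins at eps so the logarithm below stays defined.
        return [max(c / len(scores), eps) for c in counts]

    ref, cur = proportions(reference), proportions(current)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref, cur))


if __name__ == "__main__":
    month_1 = [0.95, 0.92, 0.97, 0.91, 0.96, 0.93, 0.94, 0.98]
    month_12 = [0.71, 0.65, 0.93, 0.58, 0.88, 0.62, 0.74, 0.69]
    print(round(psi(month_1, month_12), 2))
```

A rising PSI does not say why scores moved; it is a prompt to review recent images and overrides for that defect class.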

AI Visual Inspection by Industry: Specific Applications and Tritva Results

Automotive Manufacturing

High-volume production with tight tolerances where quality directly impacts safety, warranty costs, and OEM customer PPM requirements. Tritva handles: paint surface defects (scratches, runs, orange peel, fish-eyes) at full line speed across body and paint shop; weld bead quality (porosity, undercut, discontinuities, spatter) on structural welds; and assembly verification (missing clips, incorrect fastener torque markers, harness routing, label presence) across body-in-white and trim lines. Tritva deployment results: 60% reduction in customer paint complaints; 75% cut in field failures from assembly errors; defect escape rate from 0.8% to 0.06%.

Electronics Manufacturing

Miniaturised components and dense assemblies require inspection at component-level precision, often with defects measured in fractions of a millimetre. Tritva inspects: solder joint quality (bridging, insufficient solder, voids, cold joints) on SMT lines; component placement and orientation (missing components, incorrect polarity, tombstoning) immediately after pick-and-place; connector and pin integrity; and final PCB assembly completeness. AI inspection at 100% coverage replaces the sampling-based approach that leaves escape risk across the uninspected 90% of production volume.

Pharmaceutical and Healthcare

Regulated environments demand zero-defect packaging, batch-level traceability, and compliance documentation. Manual inspection is both error-prone and expensive in cleanroom environments. Tritva inspects: vial and ampoule integrity (cracks, chips, particulate contamination); fill level and cap/stopper presence and seating; label completeness, batch code legibility, and serialisation validation. Full traceability of every inspection decision (image, timestamp, AI decision, operator disposition) is logged automatically and available for FDA and EMA audit in Tritva's compliance dashboard.

Solar Panel Manufacturing

Small defects, such as microcracks invisible to the naked eye and busbar soldering inconsistencies, cause significant efficiency loss and long-term field reliability failures. Tritva inspects solar cells for: microcracks and broken fingers (using electroluminescence imaging); busbar and ribbon soldering defects; tabbing misalignment; and module-level inspection for lamination bubbles, backsheet damage, and edge chipping. Catching microcracks before lamination avoids downstream rework costs that can exceed the value of the cell.

Textile Manufacturing

Large-area materials inspected at production speeds make manual inspection slow, inconsistent, and expensive. AI handles the scale challenge by inspecting the full fabric surface continuously. Tritva detects: holes, tears, stains, and weft/warp defects; pattern repeat misalignment and seam quality; and shade variation across fabric rolls for accurate grading and rejection classification. Automated fabric grading replaces subjective visual grading with objective, reproducible defect-based classification.

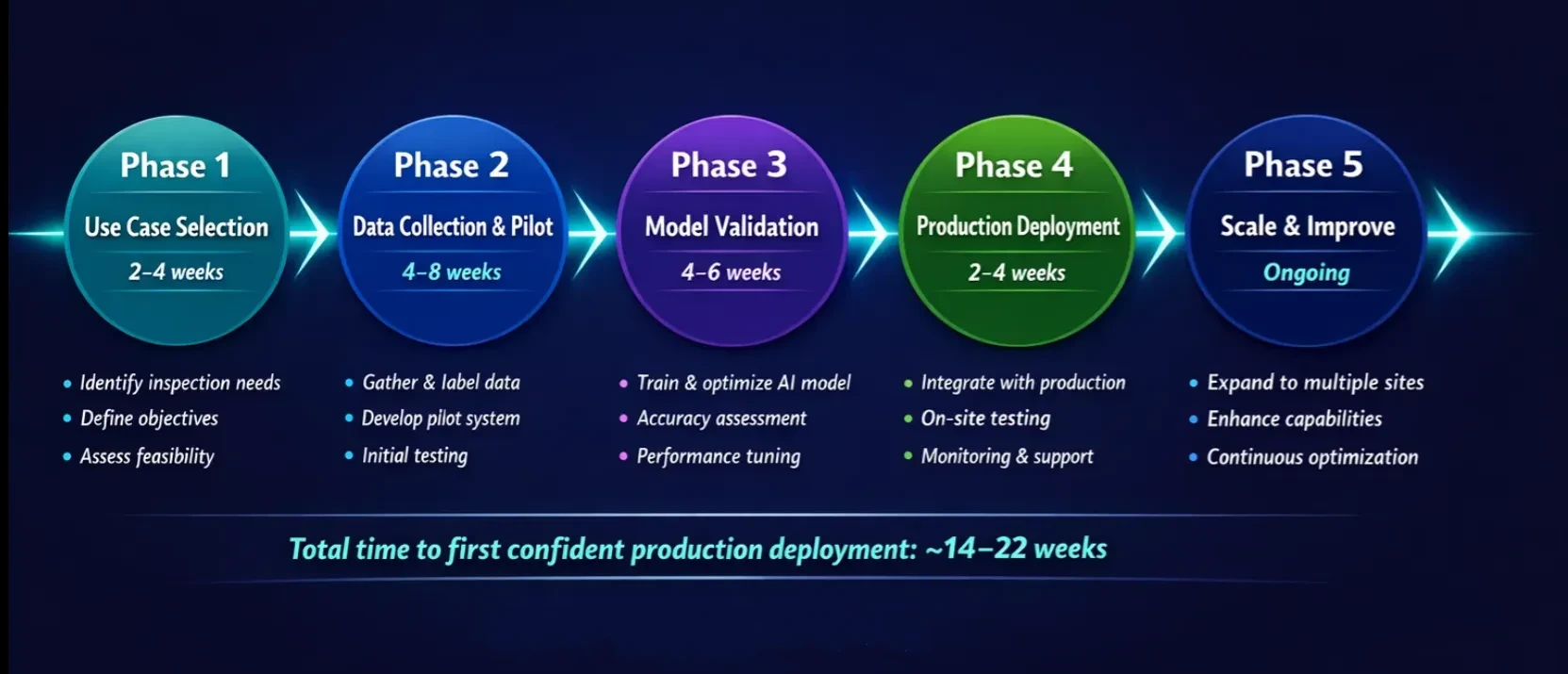

AI Visual Inspection Implementation Roadmap: 5 Phases from Use Case to Enterprise Scale

The following roadmap reflects how successful AI visual inspection deployments are structured, from initial use case selection to multi-site enterprise deployment.

Implementation Roadmap

| Phase | Timeline | What Happens | Tritva Delivers |
|---|---|---|---|
| Phase 1: Use Case Selection | 2–4 weeks | Rank quality pain points by financial impact. Identify highest-cost inspection point. Assess feasibility. Document current defect rate and COPQ baseline. | Ombrulla scoping engineers assess your line, producing a feasibility report, camera placement recommendation, and ROI projection before any hardware is purchased. |
| Phase 2: Data Collection and Pilot Setup | 4–8 weeks | Install cameras and lighting. Capture images at actual line speed. Label defect images using Tritva Vision. Train initial model. Establish shadow mode. | Tritva Vision annotation interface: quality engineers label images directly; no data science team required. |
| Phase 3: Model Validation | 4–6 weeks | Run in shadow mode. Compare AI vs operator decisions on every part. Measure precision, recall, false reject rate. Tune confidence thresholds. | Tritva pilot dashboard shows real-time prediction accuracy (precision, recall, false reject rate per defect class), updated daily. |
| Phase 4: Production Deployment | 2–4 weeks | Switch from shadow to active mode. Flagged parts go to operator verification queue. Monitor closely for first 2–4 weeks. Update operator SOPs. | Tritva operator interface designed for production floor use: simple, fast disposition workflow with override logging. |
| Phase 5: Scale and Improve | Ongoing | Expand to next inspection point. Roll out to additional lines and sites. Retrain models periodically. Activate Petran APM integration. | Tritva model performance monitoring flags drift automatically. Central console deploys model updates to all sites simultaneously. |

What to Avoid in Implementation

- Starting with the hardest use case: The use case with the most subtle defects and complex lighting is not where to start. Start where success is achievable and financially significant. A reliable model on a well-defined use case builds organisational confidence for more complex applications.

- Underestimating integration work: The AI model is 30% of the implementation. Camera hardware, lighting, trigger signals, reject mechanisms, and MES/QMS data integration are the other 70%. Projects that allocate time only to the AI model consistently run over schedule.

- Skipping operator training: A technically excellent model deployed without operator buy-in will be systematically bypassed. Operators who distrust the system find workarounds that render the AI ineffective. Invest in shadow mode and override review sessions.

- Not planning model maintenance: No model maintenance plan means degrading performance over 6–12 months as production conditions evolve. Define the retraining cadence, the performance triggers for retraining, and who is responsible before deployment.

Measuring AI Visual Inspection Success: The Three-Layer KPI Scorecard

AI visual inspection success should be measured against business outcomes, not model accuracy. A model that achieves 97% accuracy but runs at 50% coverage has not delivered the promised value.
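The arithmetic behind that example is worth making explicit. As a simplification, assume the 97% figure is the share of defects caught on inspected units and that uninspected units pass through unchecked; `effective_capture_rate` is a hypothetical helper name.

```python
def effective_capture_rate(detection_rate, coverage):
    """Share of all defects caught, assuming uninspected units are never checked."""
    return detection_rate * coverage


# 97% detection on 50% of units catches under half of all defects
print(f"{effective_capture_rate(0.97, 0.50):.1%}")  # 48.5%
```

Under these assumptions, more than half of all defects still reach the customer, which is why coverage sits alongside accuracy in the scorecard.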

Three-Layer KPI Scorecard

| KPI Layer | Metrics to Track | Cadence | Target Direction |
|---|---|---|---|
| Executive Outcomes | Customer PPM / escaped defects · COPQ · FPY / RTY · Scrap and rework cost · Payback / NPV / IRR vs plan | Monthly / Quarterly | Defect PPM ↓ · COPQ ↓ · FPY ↑ · ROI ↑ |
| Operational Health | Inspection coverage (% units inspected) · Takt time compliance · System uptime + MTTR · Override rate · False reject operational burden | Weekly | Coverage 100% · Latency < takt · Uptime > 99% · Overrides ↓ |
| Inspection Performance | Defect capture rate pre-shipment · CTQ miss rate · False reject rate + cost · Precision / recall by defect class · Model drift indicators | Weekly / Biweekly | Capture rate ↑ · Miss rate ↓ · FRR ↓ · Drift detected early |

The Most Important Executive KPIs: Customer PPM (escaped defects per million units shipped) and COPQ (cost of poor quality: scrap + rework + warranty + containment costs combined) are the two metrics that translate AI inspection performance into language that procurement, operations leadership, and the board understand. If Customer PPM is declining and COPQ is declining, the system is working. If model accuracy is improving but neither metric moves, the deployment has a scope, integration, or coverage problem that requires investigation.
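Both executive metrics follow directly from the definitions above. The sketch below uses hypothetical function names and made-up figures purely to show the calculation.

```python
def customer_ppm(escaped_defects, units_shipped):
    """Escaped defects per million units shipped."""
    if units_shipped <= 0:
        raise ValueError("units_shipped must be positive")
    return escaped_defects * 1_000_000 / units_shipped


def copq(scrap, rework, warranty, containment):
    """Cost of poor quality as the sum of its four components (same currency)."""
    return scrap + rework + warranty + containment


# Illustrative figures only: 12 escapes in 400,000 shipped units
print(customer_ppm(12, 400_000))            # 30.0
print(copq(50_000, 30_000, 15_000, 5_000))  # 100000
```

Tracking both against the same period makes it easier to see whether an accuracy improvement is actually reaching the customer and the cost base.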

How to Choose the Right AI Visual Inspection Platform: A Buyer's Framework

Choosing the right AI visual inspection solution is less about which vendor has the best AI and more about fit-for-production: where inference happens, how cleanly the platform integrates with your automation stack, whether it handles your specific production variability, and what it actually costs to run and maintain over 3–5 years.

Platform Selection Framework

| Decision Area | What It Means | What to Ask / Verify | Tritva Position |
|---|---|---|---|
| Architecture (Edge vs Cloud) | Where does AI inference happen and where does data live? | Can it run offline? What is inference latency vs takt time? What data goes to cloud? | Tritva runs edge inference at the station (sub-100ms) with cloud model management. Works fully offline for air-gapped lines. |
| Integration Capability | Can it connect to your existing automation stack? | Supported protocols: OPC-UA, Modbus, Ethernet/IP, REST API. Does it write defect codes into your MES/QMS? | Tritva integrates via standard industrial protocols, tested with modern and legacy PLC/SCADA systems. |
| Customisation vs Off-the-Shelf | Does it handle your actual defects, materials, and variability? | How does it handle multiple defect classes? Evolving CTQs? Show validation data. | Tritva Vision trains custom models from your production images. Not a pre-trained generic model. |
| Vendor Evaluation | Is the vendor capable and reliable long-term? | Verify manufacturing deployments in your sector. SLA and support response times. Product roadmap. | Ombrulla operates across manufacturing, oil and gas, pharma, solar, and infrastructure. |
| POC Best Practices | How should you validate before full deployment? | Test with real parts, real line conditions, real variation. Define success criteria upfront. | Tritva pilots typically run 4–8 weeks in shadow mode with parallel performance dashboards. |
| Total Cost of Ownership | What does it actually cost to run over 3–5 years? | Include: hardware, software licence, integration, compute, retraining, support, calibration, spares. | Tritva consolidates multiple stations on a single platform licence, reducing per-station cost at scale. |

The POC Design Principle: Short POCs run under ideal conditions (freshly prepared sample parts, controlled lighting, stable process settings) consistently overestimate production performance. A POC that does not include variation across shifts, material batches, and process conditions does not predict how the system will perform after 6 months. Design your POC to be uncomfortable: use real production parts, real lighting, real line speed, and real process variation. If the system performs well under those conditions, it is far more likely to perform well in production.

Beyond Inspection: The Tritva + Petran Closed-Loop Quality-Maintenance System

Most AI visual inspection deployments stop at the inspection decision. Defects are detected, parts are rejected or accepted, and the quality record is logged. The next step is to use the pattern of which defects are appearing, on which lines, and at what frequency to understand the equipment condition that is causing them. That requires connecting the inspection system to the predictive maintenance system.

Tritva and Petran are Ombrulla's two core industrial AI platforms: AI Visual Inspection and Asset Performance Management, respectively. They integrate natively. When Tritva detects a spike in surface scratch frequency on a stamping line, that defect pattern data feeds automatically into Petran's predictive maintenance models for the press producing those parts. Petran correlates the visual quality signal with vibration and temperature sensor data from the press, identifies the bearing degradation that is causing the dimensional variation that is causing the scratches, and schedules proactive bearing maintenance during the next planned window.

The result: the quality problem is resolved at its mechanical root cause, not treated as a quality exception to be managed indefinitely. And the press failure that would have caused a multi-hour unplanned stoppage is prevented. The combined ROI of Tritva + Petran consistently exceeds the sum of their individual ROI projections, because the closed loop generates value streams (quality improvement and maintenance cost reduction) that are compounding rather than parallel.
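The first step in that loop, recognising a spike in defect frequency, can be illustrated with a simple statistical rule. This is a generic sketch and not how Tritva or Petran detect patterns: the function name, the mean-plus-k-standard-deviations rule, and the default `k=3.0` are assumptions chosen for illustration.

```python
from statistics import mean, stdev


def is_defect_spike(baseline_rates, current_rate, k=3.0):
    """Flag current_rate if it exceeds the baseline mean by more than k standard deviations.

    baseline_rates: defect rates (e.g. scratches per part) from recent normal periods.
    """
    if len(baseline_rates) < 2:
        raise ValueError("need at least two baseline samples")
    threshold = mean(baseline_rates) + k * stdev(baseline_rates)
    return current_rate > threshold
```

A flagged spike on its own says nothing about cause; the value described above comes from correlating it with equipment sensor data for the same period.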

The Tritva-Petran integration is native: no middleware, no custom API, no separate integration project. Both platforms are available through Ombrulla.

Frequently Asked Questions: AI Visual Inspection in Manufacturing

What is the difference between AI visual inspection and traditional AOI?

Traditional automated optical inspection (AOI) uses rule-based logic: programmed thresholds that define specific defect geometries (e.g. 'reject if scratch exceeds 2mm'). Rules work for simple, stable applications but require manual reprogramming for each product variant. AI visual inspection learns from labelled examples rather than rules. It can handle complex, subtle, and multi-class defects without explicit programming, adapts to production variability, and improves over time with new training data.

How accurate is AI visual inspection compared to manual inspection?

AI visual inspection consistently achieves 99%+ defect detection accuracy on well-trained defect classes with quality imaging, compared to 70–85% for manual inspection under typical production conditions (Deloitte visual inspection benchmark, McKinsey Manufacturing Lighthouses report). The accuracy gap widens during second and third shifts, at high line speeds, and for subtle cosmetic defects. The more important comparison is consistency: AI applies identical criteria to every part at every speed at every hour of the day.

How much training data does AI visual inspection require?

For transfer learning deployments (the standard approach in 2026), 50–150 labelled defect images per class is often sufficient for a working initial model on a well-defined defect. Complex defect classes may require 300–800 examples. More important than total count is variation coverage: images across material batches, shifts, lighting conditions, and defect severity levels. A model trained on 1,000 representative images typically outperforms one trained on 5,000 images from a single day.

What is the typical ROI payback period for AI visual inspection?

Most mid-to-large manufacturing deployments achieve payback within 9–18 months. Primary ROI drivers are scrap/rework cost reduction (AI catches defects before downstream value is added), defect escape reduction (typically 60–90% improvement), and inspection labour redeployment. Automotive, electronics, and pharmaceutical deployments achieve the fastest payback, some within 6–9 months when a single recall event is prevented.
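A simple payback estimate divides the total investment by the net monthly savings. The sketch below uses a hypothetical function name and made-up figures, and ignores discounting, ramp-up time, and ongoing costs that a full NPV or IRR analysis would include.

```python
def payback_months(total_investment, monthly_net_savings):
    """Simple (undiscounted) payback period in months."""
    if monthly_net_savings <= 0:
        raise ValueError("monthly_net_savings must be positive for payback to occur")
    return total_investment / monthly_net_savings


# Illustrative figures only: 240,000 invested, 20,000 saved per month
print(payback_months(240_000, 20_000))  # 12.0
```

Monthly net savings should combine scrap and rework reduction, avoided escape costs, and redeployed labour, minus running costs such as licences and retraining.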

What is Tritva and how does it differ from other AI inspection platforms?

Tritva is Ombrulla's purpose-built AI visual inspection platform for industrial manufacturing. Three differentiators: (1) Tritva Vision: model training and management designed for quality engineers, not data scientists; (2) Native Petran integration: closed-loop quality-to-maintenance intelligence; (3) Enterprise multi-site architecture: a single deployment manages inspection across all lines and facilities with central model management and compliance reporting.

Can AI visual inspection handle multiple product variants on the same line?

Yes, this is a core advantage over rule-based AOI. Tritva supports multi-SKU production lines by training separate model configurations per product type and automatically loading the correct configuration when a changeover signal is received from the PLC or MES. Model switching happens in milliseconds and requires no operator intervention beyond the normal changeover sequence.
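The per-SKU switching pattern can be pictured as a lookup keyed by product type. The sketch below is a generic illustration, not Tritva's API: `ModelRegistry`, its methods, and the configuration fields are all hypothetical, and the changeover signal is represented by a plain method call.

```python
class ModelRegistry:
    """Hypothetical per-SKU model configuration registry (illustration only)."""

    def __init__(self):
        self._configs = {}
        self.active_sku = None

    def register(self, sku, config):
        """Store the model configuration to use for a given product type."""
        self._configs[sku] = config

    def on_changeover(self, sku):
        """Activate the configuration for the SKU named in the changeover signal."""
        # Fail loudly rather than keep inspecting with the previous product's model
        if sku not in self._configs:
            raise KeyError(f"no model configuration registered for {sku}")
        self.active_sku = sku
        return self._configs[sku]


registry = ModelRegistry()
registry.register("SKU-A", {"model": "sku_a.onnx", "threshold": 0.80})
registry.register("SKU-B", {"model": "sku_b.onnx", "threshold": 0.90})
active = registry.on_changeover("SKU-B")  # e.g. triggered by a PLC or MES signal
```

Rejecting an unknown SKU, rather than falling back to the last configuration, keeps a missed registration from silently inspecting parts against the wrong criteria.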

How does AI visual inspection integrate with existing MES and ERP systems?

Tritva integrates with MES, SCADA, ERP/QMS, and PLC systems via standard industrial protocols: OPC-UA, Modbus, Ethernet/IP, and REST API. Defect records (including image, defect class, severity, confidence score, and disposition) are written to the MES/QMS automatically. Tritva has documented integration patterns for common manufacturing SCADA and MES platforms.
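To make the defect record concrete, the sketch below models the fields listed above as a small data structure serialised to JSON, the kind of payload a REST integration might carry. The field names, value formats, and the added `part_id` and `timestamp` fields are assumptions for illustration, not Tritva's actual schema.

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class DefectRecord:
    part_id: str       # hypothetical part or serial identifier
    timestamp: str     # ISO 8601 string, assumed format
    image_ref: str     # reference to the stored image, not the image bytes
    defect_class: str
    severity: str
    confidence: float  # model confidence score between 0 and 1
    disposition: str   # e.g. "reject", "accept", "operator_review"


def to_json(record):
    """Serialise a defect record to a JSON string with stable key order."""
    return json.dumps(asdict(record), sort_keys=True)


record = DefectRecord(
    part_id="P-000123",
    timestamp="2026-01-15T08:30:00Z",
    image_ref="images/P-000123.png",
    defect_class="surface_scratch",
    severity="major",
    confidence=0.97,
    disposition="reject",
)
payload = to_json(record)
```

Storing an image reference rather than the image itself keeps the quality record small while preserving traceability back to the original capture.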

Conclusion: AI Visual Inspection Works. The Question Is How to Deploy It Well

AI visual inspection is production-proven technology in 2026. It reliably catches defects that manual inspection misses, maintains consistent quality standards across every shift and every operator, and generates the quality data that drives systematic process improvement. The debate is not whether it works. It is how to deploy it in a way that delivers the projected ROI rather than becoming another underutilised quality technology investment.

The operational principles that determine deployment success are consistent across industries: specify the use case precisely before purchasing hardware; invest in imaging quality as the highest-leverage engineering decision; build training datasets that represent the full range of production variation, not just ideal conditions; deploy in shadow mode to build operator trust; plan for model maintenance from day one; and integrate with your existing systems so the AI decision flows into your quality record without manual re-entry.

Start with your biggest pain point: the inspection application where defect escapes or scrap costs are highest and most visible to senior leadership. Prove the business case on that application. Then scale. Tritva is built for this path: single station to multi-line to multi-site, from a single platform with central model management and a compliance record that grows with your deployment.

The technology is ready. The ROI is documented. The question is choosing the right application, the right imaging configuration, and the right platform for your operation, and executing the deployment with the discipline that separates systems that deliver from systems that disappoint.

Ombrulla's AI inspection team will review your production line, identify the highest-value inspection point, and demonstrate Tritva detecting defects on your actual parts, before you commit to hardware.

About Ombrulla

Ombrulla builds AI-powered visual inspection, asset performance management, and predictive maintenance solutions for manufacturing, oil and gas, infrastructure, and utilities. Tritva, Ombrulla's AI Visual Inspection platform, is deployed across automotive, electronics, pharmaceutical, solar, and textile manufacturing globally. Petran, Ombrulla's AI + IoT APM platform, integrates natively with Tritva to deliver closed-loop quality-to-maintenance intelligence. Offices in the UK, USA, Germany, and India.