What is AI visual inspection, and how is it different from traditional methods?

AI visual inspection is an automated quality-control method that uses cameras, computer vision, and deep-learning models to detect defects, verify assembly, and grade surfaces in real time. Unlike rule-based machine vision, which uses fixed thresholds ('reject if pixel intensity drops below X'), AI models learn from labeled examples of good and defective parts and generalize to unseen variations. In manufacturing quality control, this produces results that stay consistent across shifts and can be defended in audits.

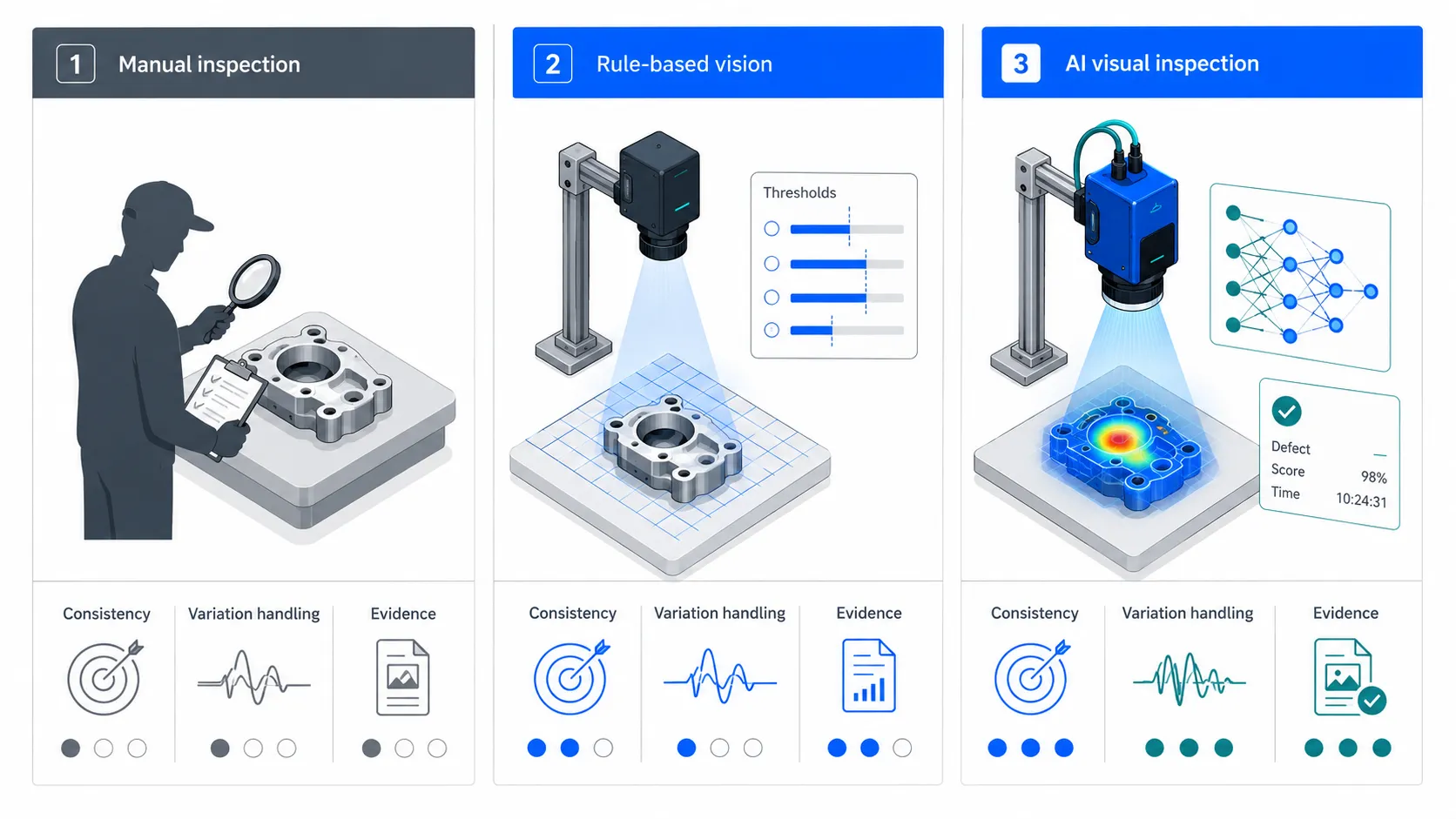

Traditional inspection is an umbrella term for two approaches that pre-date AI:

- Manual inspection: human operators or QA staff visually check parts, often with magnification, gauges, or fixtures. Effective for small batches and subjective grading. Subject to fatigue, training drift, and shift-to-shift variation

- Rule-based machine vision: deterministic image-processing pipelines (thresholding, edge detection, blob analysis, pattern matching) running on industrial cameras. Effective on stable products with consistent lighting. Brittle to variation in surface texture, supplier batches, or environmental conditions

Manual vs rule-based vs AI inspection: the side-by-side comparison

Buyers comparing AI visual inspection vs traditional inspection methods usually need to make one of three calls: replace manual inspection at a critical station, replace a rule-based system that keeps drifting, or add inspection to a station that has none. The matrix below addresses all three. Ombrulla's Tritva platform, covered later in this article, is one example of edge-based AI inspection.

| Criterion | Manual inspection | Rule-based machine vision | AI visual inspection |
|---|---|---|---|
| Consistency across shifts | Low - drifts with fatigue | Medium - stable until conditions change | High - same model, same standard |
| Tolerance to variation | Medium - humans adapt slowly | Low - needs retuning | Medium to high - generalizes from data |
| Decision time per part | Limited by operator speed | Fast (5–50 ms) | Fast (50–200 ms on edge) |
| Defect coverage | Subjective + obvious | Pre-defined defects only | Known + novel via anomaly detection |
| Changeover effort | Low | High - re-tune per SKU | Medium - retrain or fine-tune |
| Audit / evidence trail | Paper or spreadsheet | Pass/fail logs | Image + metadata per part |
| Capex | Low | Medium | Medium to high |
| Opex | High (labor) | Low | Low to medium |
| Best fit | Prototypes, low volume, cosmetic | Simple, stable, high-contrast | Mixed SKUs, subtle defects, regulated |

Where AI wins: accuracy, consistency, and audit evidence

Accuracy on subtle and variable defects

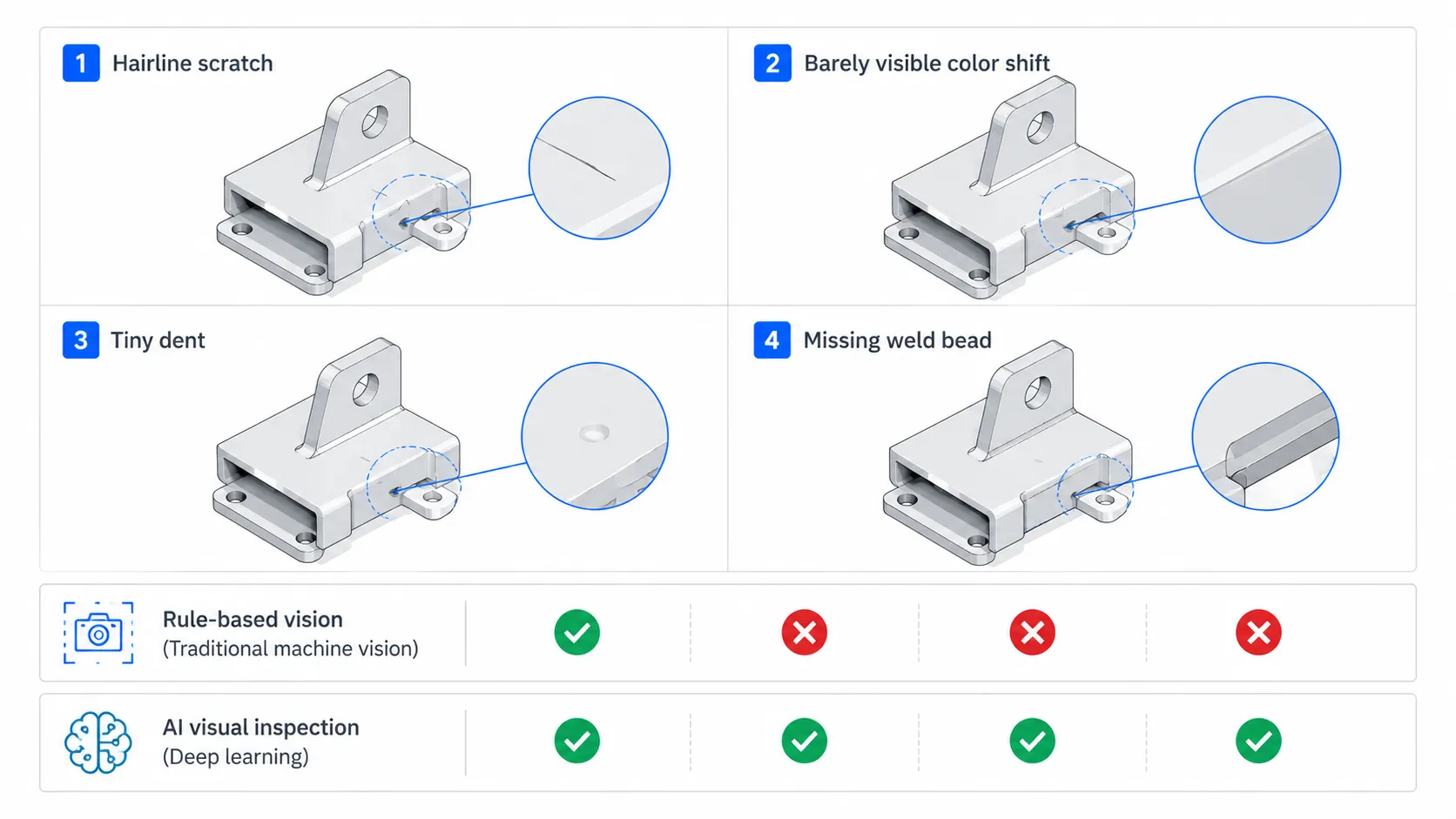

Rule-based vision excels at presence/absence checks (a screw is either there or not). It struggles when the defect is a faint scratch, a slight color shift, or a missing weld bead in a region that varies from part to part. AI models trained on hundreds to thousands of examples learn the joint distribution of normal variation and defects, which is why they catch borderline cases that fixed thresholds miss.


LG inspected 200 images in 0.8 seconds in a Google Cloud edge-AI manufacturing case study, achieving 99.9% accuracy and reporting USD 20M in annual savings.

Consistency across shifts

A human inspector at hour 7 of a shift is not the same inspector as at hour 1. AI applies the same model parameters at minute 1 and minute 480. For multi-shift plants, this is often the largest driver of measurable improvement: customer claims fall because the standard does not drift.

Defensible audit trail

Every AI inspection decision can be stored as: (a) the input image, (b) the model version, (c) the prediction with confidence score, (d) the timestamp, (e) the station ID, and (f) the operator on shift. When a customer files a complaint or an auditor asks for evidence, you have the exact frame the model evaluated and the decision logic. Manual inspection cannot match this.
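A minimal sketch of what one stored decision could look like, assuming a plain Python dataclass serialized to JSON. The field names, the `s3://` image path, the model version string, and the helper `models_that_rejected` are hypothetical illustrations of items (a) to (f), not a specific product schema.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class InspectionRecord:
    """One immutable evidence record per inspected part (hypothetical schema)."""
    image_uri: str       # (a) where the input image is stored
    model_version: str   # (b) exact model build that made the call
    prediction: str      # (c) "pass" or a defect class name
    confidence: float    # (c) model score for that prediction, 0.0 to 1.0
    timestamp: str       # (d) ISO 8601 timestamp in UTC
    station_id: str      # (e) inspection station
    operator_id: str     # (f) operator on shift


def make_record(image_uri, model_version, prediction, confidence, station_id, operator_id):
    """Build a record and stamp it with the current UTC time."""
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    return InspectionRecord(
        image_uri=image_uri,
        model_version=model_version,
        prediction=prediction,
        confidence=confidence,
        timestamp=datetime.now(timezone.utc).isoformat(),
        station_id=station_id,
        operator_id=operator_id,
    )


def models_that_rejected(records, image_uri):
    """Answer 'which model called this part bad?' for one image."""
    return {r.model_version for r in records
            if r.image_uri == image_uri and r.prediction != "pass"}


record = make_record("s3://evidence/st04/part-000123.png", "defect-net-1.4.2",
                     "scratch", 0.93, "ST-04", "op-117")
print(json.dumps(asdict(record)))
```

Freezing the dataclass mirrors the audit requirement: once written, a record should not be edited in place.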

Anomaly detection for unknown defects

Modern AI inspection systems do not only classify known defect types. Unsupervised or self-supervised anomaly-detection models flag any part that deviates statistically from the 'normal' distribution, even when the defect mode has never been seen before. This is critical for new-product introductions and for catching upstream process drift early.
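To make "deviates statistically from the normal distribution" concrete, here is a deliberately simple stand-in: a diagonal-Gaussian (per-feature z-score) detector fitted only on good parts. It assumes each part has already been reduced to a numeric feature vector (in practice often an embedding from a pretrained network); production anomaly-detection models are more sophisticated, so treat this as an illustration of the principle, not of any specific system.

```python
import random
import statistics
from typing import Sequence


class DiagonalGaussianDetector:
    """Flags feature vectors far from the distribution of good parts.

    Fitted on good parts only, so no defect labels are required.
    """

    def fit(self, normal: Sequence[Sequence[float]], quantile: float = 0.99):
        dims = len(normal[0])
        columns = [[v[d] for v in normal] for d in range(dims)]
        self.means = [statistics.fmean(c) for c in columns]
        # Floor the spread so constant features do not cause division by zero.
        self.stds = [max(statistics.pstdev(c), 1e-6) for c in columns]
        # Threshold = chosen quantile of scores seen on good parts.
        scores = sorted(self.score(v) for v in normal)
        idx = min(int(quantile * len(scores)), len(scores) - 1)
        self.threshold = scores[idx]
        return self

    def score(self, v: Sequence[float]) -> float:
        # Squared Mahalanobis distance under a diagonal covariance.
        return sum(((x - m) / s) ** 2 for x, m, s in zip(v, self.means, self.stds))

    def is_anomalous(self, v: Sequence[float]) -> bool:
        return self.score(v) > self.threshold


# Synthetic "good part" features for illustration only.
random.seed(0)
good_parts = [[random.gauss(0.0, 1.0), random.gauss(5.0, 0.5)] for _ in range(500)]
detector = DiagonalGaussianDetector().fit(good_parts)
print(detector.is_anomalous([0.0, 5.0]))  # close to the normal cluster
print(detector.is_anomalous([6.0, 9.0]))  # far outside anything seen in training
```

The key property is that the second vector is flagged without any labeled example of that defect, which is why this style of model helps with new-product introductions.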

Where traditional methods still win: when not to deploy AI

AI is not the right answer for every station. The mistake we see most often in B2B procurement is treating 'AI everywhere' as the goal. The goal is the simplest method that meets the station's quality, throughput, and audit requirements. Inspection is also not the only lever: AI predictive-maintenance software can complement it by identifying root causes before they escalate.

When manual inspection is still the right call

1. Prototype runs and engineering samples - defect modes are not yet known, so there is no training data

2. Very low volume (under ~500 units per shift) - labor cost is low, and AI capex does not amortize

3. Subjective cosmetic grading where the customer specification is 'to taste' - luxury goods, hand-finished surfaces, premium textiles

4. Stations where a human must already touch the part for assembly or test - adding inspection to that touchpoint is free

When rule-based vision is still the right call

1. Stable products with consistent lighting, geometry, and supplier inputs

2. Simple binary checks: presence/absence, barcode reading, OCR on labels, fill-level detection

3. Hard-coded measurement against a fixed dimensional tolerance with a calibrated camera

4. Regulatory environments where the algorithm must be deterministic and auditable line by line (some pharma applications)

ROI and total cost of ownership

Most B2B buyers comparing AI visual inspection vs manual inspection ask the same question: how do I justify it? ROI in automated quality control comes from four directly measurable buckets, plus two indirect ones.

The four direct ROI levers:

| Lever | What it looks like on the floor | Typical AI improvement | Where the savings show up |
|---|---|---|---|
| Scrap reduction | Defective parts thrown away after assembly | 20–60% lower scrap on covered defects | Material cost |
| Rework reduction | Borderline parts re-inspected and re-handled | 30–70% fewer false rejects | Labor + cycle time |
| Escape reduction | Defects reaching the customer | 50–90% fewer escapes on covered modes | Warranty + brand |
| Downtime reduction | Stops to investigate, sort, or recheck | 10–30% less inspection-related downtime | OEE |

The two indirect ROI levers

- Process intelligence: defect-pattern data feeds upstream root-cause analysis, and an industrial IoT real-time monitoring platform can combine it with sensor data for predictive insights. Ombrulla reports that customers using Petran for pattern detection typically reduce recurring defects by 25 to 40 percent within two quarters

- Audit and compliance: image evidence per part shortens customer audits, supports IATF 16949 / ISO 9001 evidence requirements, and reduces the cost of warranty disputes

Total cost of ownership: a worked example

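Consider a plant inspecting 1.2 million units per year across two shifts, with manual inspection at three stations costing roughly USD 240,000 per year in fully loaded labor. The defect escape rate is 0.4 percent (4,800 escapes per year), with each escape costing USD 80 in returns and rework. The AI columns assume residual labor of USD 80,000 per year and an escape rate that falls to 0.1 percent in year 1 and 0.08 percent in year 2.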

| Cost line | Manual baseline (annual) | AI inspection (year 1) | AI inspection (year 2) |
|---|---|---|---|
| Labor | USD 240,000 | USD 80,000 | USD 80,000 |
| Hardware (cameras + edge) | USD 0 | USD 90,000 | USD 0 |
| Software + licensing | USD 0 | USD 60,000 | USD 60,000 |
| Model training + tuning | USD 0 | USD 40,000 | USD 15,000 |
| Escape cost (returns/rework) | USD 384,000 | USD 96,000 | USD 76,800 |
| Total | USD 624,000 | USD 366,000 | USD 231,800 |
| Net savings vs baseline | - | USD 258,000 | USD 392,200 |
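The table's arithmetic can be reproduced with a few lines of Python, which makes it easy to rerun with your own volumes and costs. Escape rates are expressed in parts per million to keep the math in integers; the 0.1 percent and 0.08 percent AI escape rates are the values implied by the table's escape-cost row, not measured figures.

```python
UNITS_PER_YEAR = 1_200_000
COST_PER_ESCAPE_USD = 80  # returns + rework per escaped defect


def annual_cost(labor, hardware, software, training, escape_ppm):
    """Total annual cost in USD; escape rate given in parts per million."""
    escapes = UNITS_PER_YEAR * escape_ppm // 1_000_000
    return labor + hardware + software + training + escapes * COST_PER_ESCAPE_USD


manual = annual_cost(240_000, 0, 0, 0, escape_ppm=4_000)             # 0.4 %
ai_year1 = annual_cost(80_000, 90_000, 60_000, 40_000, escape_ppm=1_000)  # 0.1 %
ai_year2 = annual_cost(80_000, 0, 60_000, 15_000, escape_ppm=800)   # 0.08 %

print(manual, ai_year1, ai_year2)                # 624000 366000 231800
print(manual - ai_year1, manual - ai_year2)      # 258000 392200
```

Changing `UNITS_PER_YEAR` or `COST_PER_ESCAPE_USD` shows quickly how sensitive the business case is to volume and to the cost of a single escape.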

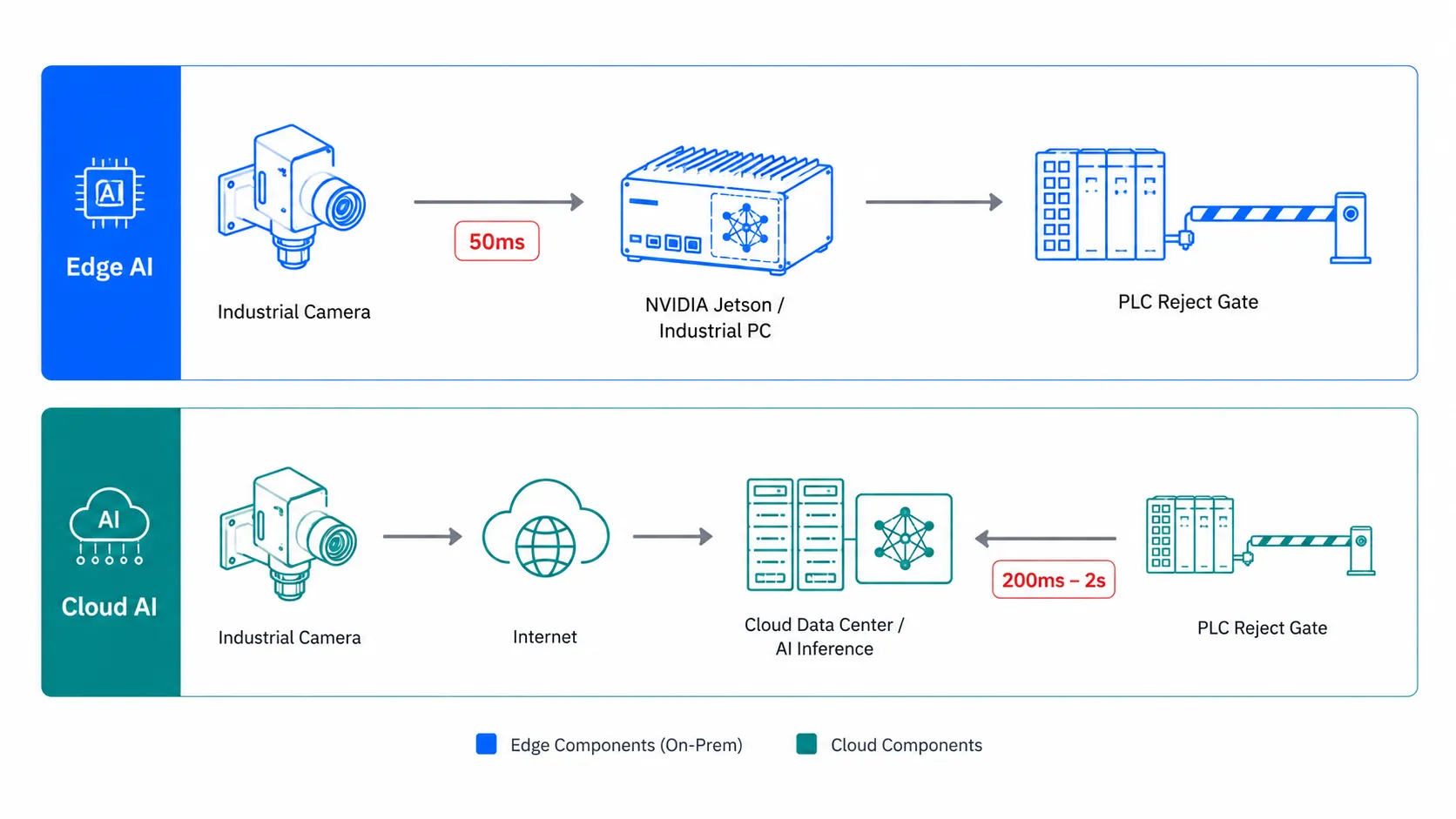

Edge vs cloud: where should the AI run

Edge AI inspection runs the model on a compute device physically near the camera (an industrial PC, a Jetson-class module, or a vision controller). Decisions happen in 50 to 200 milliseconds. Cloud AI inspection sends images to a remote model and receives the result over the network, typically 200 milliseconds to several seconds round-trip. AI asset performance management platforms can then combine edge and cloud data for plant-wide operational analysis.

Choose edge when

- Decisions must trigger a PLC reject gate within one cycle time

- The plant network is unreliable or air-gapped

- Data residency rules prevent images from leaving the facility

- Bandwidth cost of streaming high-resolution video to the cloud is prohibitive

Choose cloud when

- Latency tolerance is several seconds (post-process inspection, packaging, end-of-line audit)

- You need to consolidate inspection data across many sites for centralized analytics

- You want easier model updates and A/B testing without touching plant hardware

- Compute demand is spiky and you do not want to size edge hardware for peak
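The two checklists can be read as a simple rule: any hard edge constraint wins; otherwise cloud is a reasonable default. A minimal sketch under that assumption follows. `StationProfile`, `recommend_inference_location`, and the 2,000 ms worst-case cloud round trip are hypothetical; measure your own network before relying on any number.

```python
from dataclasses import dataclass


@dataclass
class StationProfile:
    """Hypothetical description of one inspection station."""
    decision_budget_ms: float      # time available before the PLC must act on the part
    network_reliable: bool         # False if the network is unreliable or air-gapped
    images_may_leave_site: bool    # False if data-residency rules keep images on-site
    video_upload_affordable: bool  # False if streaming high-res video is too costly


def recommend_inference_location(station: StationProfile,
                                 cloud_round_trip_ms: float = 2_000.0):
    """Return ("edge" or "cloud", reasons) from the edge criteria listed above.

    cloud_round_trip_ms is an assumed worst-case round trip, not a benchmark.
    """
    reasons = []
    if station.decision_budget_ms < cloud_round_trip_ms:
        reasons.append("decision budget shorter than cloud round trip")
    if not station.network_reliable:
        reasons.append("network unreliable or air-gapped")
    if not station.images_may_leave_site:
        reasons.append("images must stay on-site")
    if not station.video_upload_affordable:
        reasons.append("upload bandwidth too costly")
    return ("edge" if reasons else "cloud"), reasons


inline_station = StationProfile(150, True, True, True)
end_of_line_audit = StationProfile(10_000, True, True, True)
print(recommend_inference_location(inline_station))
print(recommend_inference_location(end_of_line_audit))
```

The cloud-side criteria (centralized analytics, easier A/B testing, spiky compute) are preferences rather than hard constraints, which is why the sketch only returns "cloud" when no edge constraint applies.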

The hybrid pattern most plants actually deploy

In practice, the most resilient architecture runs inference on the edge for real-time decisions and streams compressed evidence (image, prediction, metadata) to the cloud for retraining, dashboards, and cross-site benchmarking. This is the default pattern for Ombrulla deployments using Tritva at the edge and a cloud-based MLOps loop for continuous improvement.
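The essential property of the hybrid pattern is that the reject decision never waits on the network. A minimal store-and-forward sketch, assuming a hypothetical `upload` callable that returns `False` when the link is down; it is not Tritva's implementation, just one way to keep inference and evidence upload decoupled.

```python
from collections import deque
from typing import Callable


class EvidenceBuffer:
    """Bounded store-and-forward queue on the edge device (hypothetical sketch)."""

    def __init__(self, max_items: int = 10_000):
        self._queue = deque(maxlen=max_items)  # oldest item is evicted when full
        self.dropped = 0

    def push(self, item: dict) -> None:
        if len(self._queue) == self._queue.maxlen:
            self.dropped += 1  # count evictions so data loss is visible
        self._queue.append(item)

    def flush(self, upload: Callable[[dict], bool]) -> int:
        """Send queued evidence in order; stop at the first failed upload."""
        sent = 0
        while self._queue:
            if not upload(self._queue[0]):
                break  # network down: keep the item and retry later
            self._queue.popleft()
            sent += 1
        return sent

    def __len__(self) -> int:
        return len(self._queue)


def inspect_part(image_id: str, defect_score: float, threshold: float,
                 buffer: EvidenceBuffer) -> str:
    """Decide locally, then queue the evidence for the cloud MLOps loop."""
    decision = "reject" if defect_score >= threshold else "pass"
    buffer.push({"image_id": image_id, "score": defect_score, "decision": decision})
    return decision
```

Evicting the oldest evidence keeps the device running during long outages, but it also creates gaps in the audit trail; a real deployment would more likely spill to local disk and alert when capacity runs low.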

Industry evidence: how leading manufacturers use AI inspection

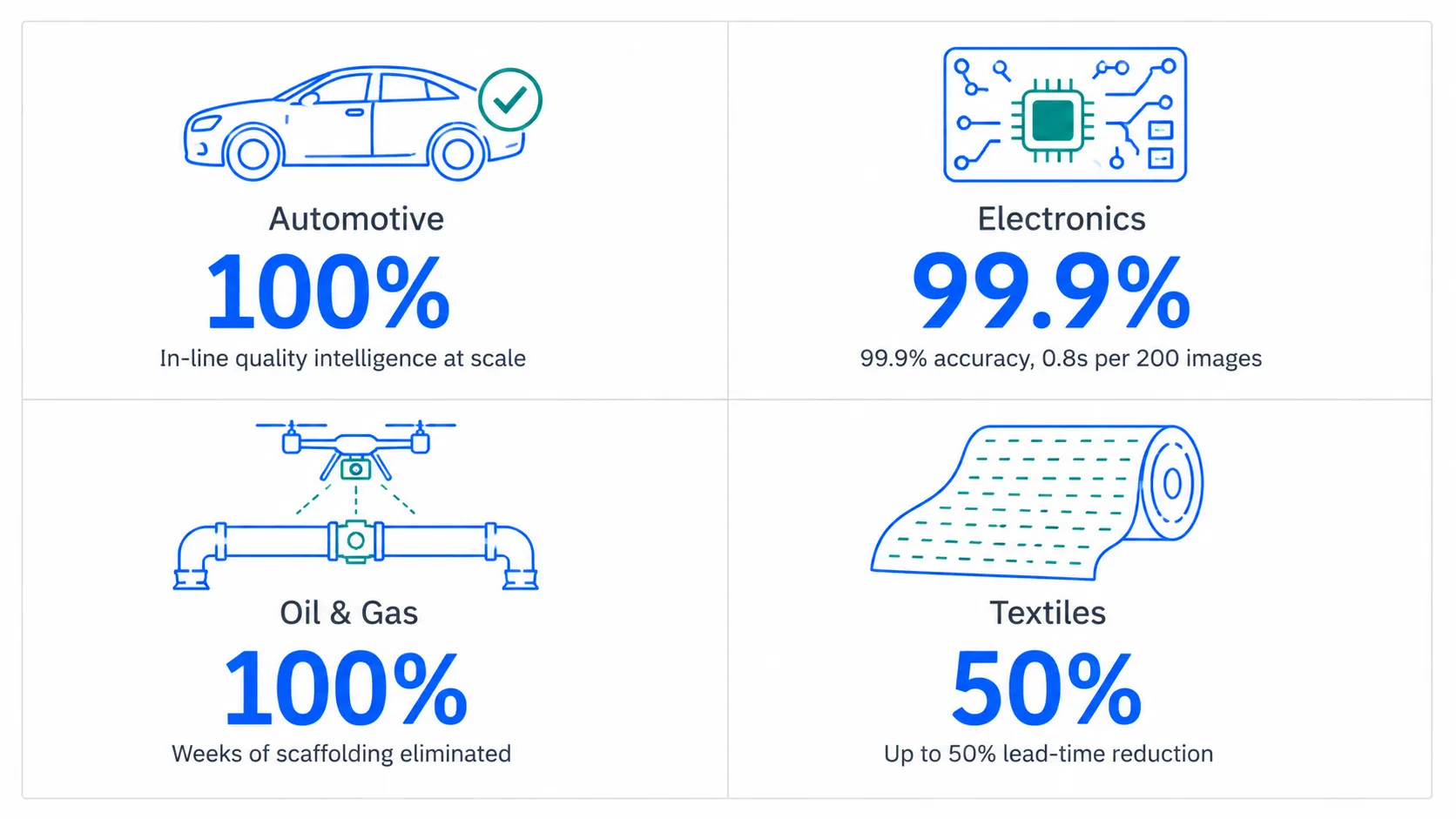

Automotive - BMW Group AIQX

BMW Group describes AIQX (Artificial Intelligence Quality Next) within its iFACTORY program. The system uses cameras and sensors along the production line to perform real-time completeness checks and anomaly detection, with feedback delivered directly to the line operator. The pattern most automotive OEMs follow is to start at one high-cost-of-escape station, typically a paint, weld, or final-assembly inspection, and expand outward.

Manufacturing - LG with Google Cloud

A Google Cloud manufacturing case study reports that LG inspected 200 images in 0.8 seconds using edge hardware to handle latency and bandwidth constraints. The same case study reports 99.9 percent accuracy and approximately USD 20 million in annual savings. The takeaway: when takt time is tight, edge deployment is not optional.

Oil and gas - Saudi Aramco with Terra Drone

Reuters reported on Terra Drone's collaboration with Saudi Aramco to support drone-based inspection of oil-and-gas assets. The economic logic is direct: a manual inspection of an elevated structure can require weeks of scaffolding setup, with proportional safety risk. Drone-mounted AI vision can compress that to a single shift and produces a complete visual record.

Textiles - automated fabric inspection

Uster has reported that automated fabric inspection, depending on setup, can deliver up to 50 percent lead-time reduction and roughly 80 percent less waste, by combining inspection with usable downstream data. Fabric is a useful canonical case for AI inspection because the defect patterns (broken threads, weave anomalies, color drift) are exactly the subtle, distributed flaws that defeat rule-based vision.

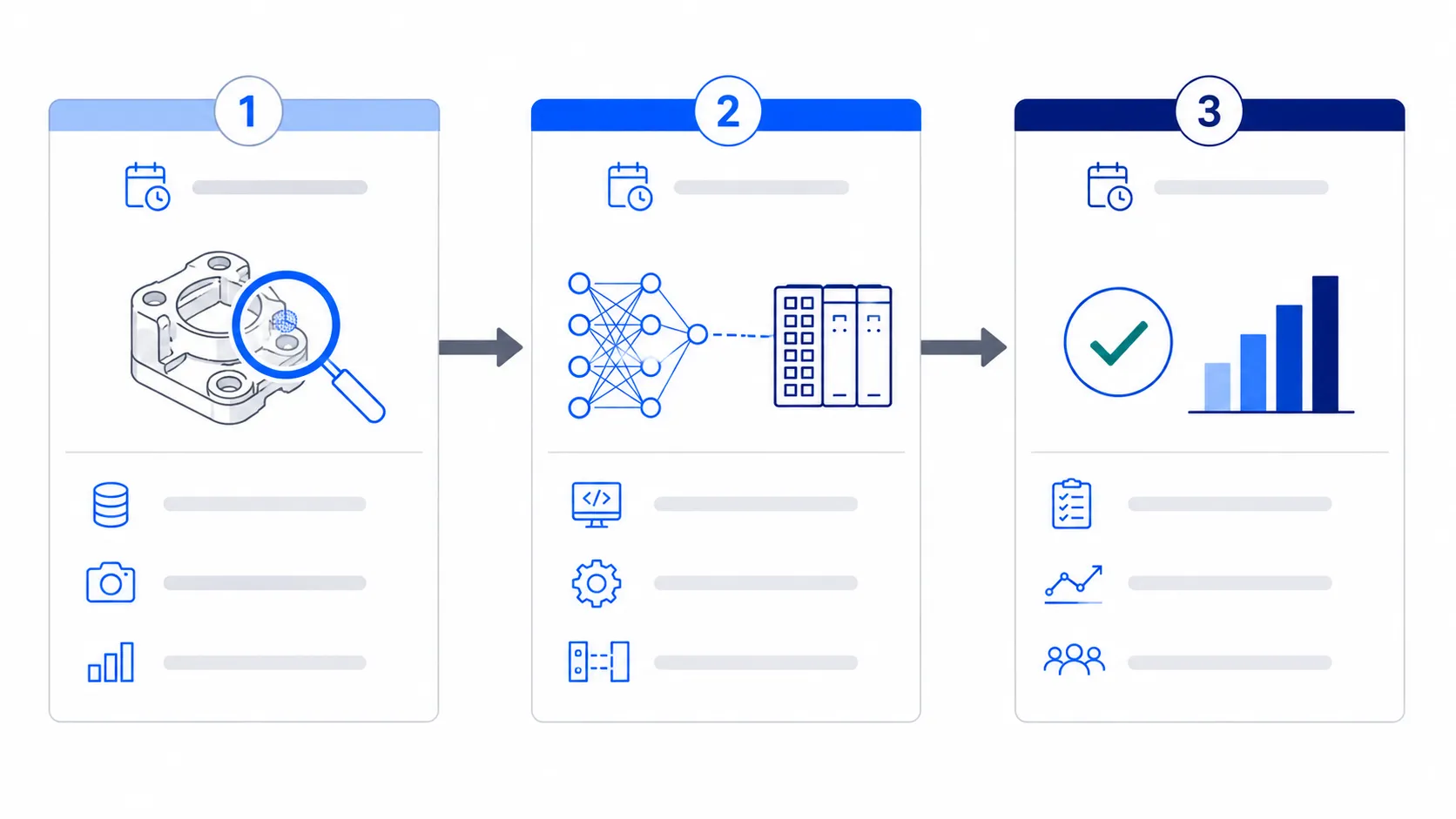

Implementation roadmap: a 90-day pilot blueprint

Do not replace everything at once. The fastest path to defensible ROI is a focused 90-day pilot at one station with one defect family, followed by structured expansion.

Phase 1 - Discovery and data (days 1 to 30)

- Pick the right first station: high cost of escape, repeatable defect, room for cameras

- Define the success metric: false-reject rate, escape rate, rework minutes per shift, or all three

- Capture a baseline data set: 1,000+ images of good parts, 100+ per defect class, from real production conditions (not lab)

- Lock down lighting, camera position, and trigger logic: variability here will dominate model error

- Sign off on the labeling guideline with QA: what counts as a defect must be unambiguous
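Before training starts, it helps to check the Phase 1 data set against the minimums above (1,000+ good images, 100+ per defect class). A minimal sketch, assuming each image carries a single string label and that good parts are labeled `"good"`; the label names are hypothetical.

```python
from collections import Counter
from typing import Dict, Iterable

MIN_GOOD = 1_000
MIN_PER_DEFECT_CLASS = 100


def dataset_gaps(labels: Iterable[str], defect_classes: Iterable[str]) -> Dict[str, int]:
    """Return how many more images each class needs; empty dict means ready.

    defect_classes lists every class in the labeling guideline, so classes
    with zero images are reported too.
    """
    counts = Counter(labels)
    gaps = {}
    if counts["good"] < MIN_GOOD:
        gaps["good"] = MIN_GOOD - counts["good"]
    for cls in defect_classes:
        if counts[cls] < MIN_PER_DEFECT_CLASS:
            gaps[cls] = MIN_PER_DEFECT_CLASS - counts[cls]
    return gaps


labels = ["good"] * 1_200 + ["scratch"] * 150 + ["dent"] * 40
print(dataset_gaps(labels, ["scratch", "dent", "crack"]))
```

Passing the class list from the labeling guideline, rather than inferring it from the data, is what catches a defect class that was never captured at all.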

Phase 2 - Train and integrate (days 31 to 60)

- Train an initial model: for most surface and assembly defects, a fine-tuned CNN or vision transformer reaches usable accuracy within this window

- Validate on a held-out test set, then on live line data with a human-in-the-loop review queue

- Integrate with the PLC for reject signaling: start in alert-only mode before enabling automatic rejection

- Wire up MES or SCADA reporting for the metrics you defined in Phase 1

- Stand up the evidence store: images, predictions, and metadata indexed per part
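Validation is easier to agree on with QA when the Phase 1 metrics are computed the same way every time. A minimal sketch, assuming one boolean ground-truth label and one boolean reject decision per part. It defines false-reject rate as good parts rejected divided by all good parts, and escape rate as defective parts passed divided by all parts inspected (the definition used in the TCO example); other plants define these differently.

```python
from typing import Dict, Sequence


def inspection_metrics(is_defective: Sequence[bool],
                       rejected: Sequence[bool]) -> Dict[str, float]:
    """Compute false-reject rate, escape rate, and accuracy for one test set."""
    if len(is_defective) != len(rejected):
        raise ValueError("labels and decisions must have the same length")
    n = len(is_defective)
    good = sum(1 for d in is_defective if not d)
    false_rejects = sum(1 for d, r in zip(is_defective, rejected) if not d and r)
    escapes = sum(1 for d, r in zip(is_defective, rejected) if d and not r)
    return {
        "false_reject_rate": false_rejects / good if good else 0.0,
        "escape_rate": escapes / n,
        "accuracy": (n - false_rejects - escapes) / n,
    }


# A "model" that passes every part, on a set with 0.4 % defects.
truth = [False] * 996 + [True] * 4
print(inspection_metrics(truth, [False] * 1_000))
```

The example shows why accuracy alone is a poor success metric: the do-nothing model scores 99.6 percent accuracy while letting every defect escape.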

Phase 3 - Validate and scale (days 61 to 90)

- Run in production in shadow mode, comparing against manual or rule-based inspection for at least 10 shifts

- Tune the decision threshold with QA based on real false-reject and escape data

- Document the learnings and the model card: training data sources, performance bounds, known failure modes

- Decide whether to scale to additional stations, additional defect classes, or both. AI-driven overall equipment effectiveness (OEE) optimization can help prioritize which stations deliver the highest ROI

- Set the retraining cadence: drift monitoring, scheduled refreshes, and a process to add new defect classes
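Tuning the threshold "based on real false-reject and escape data" can be framed as picking the cut-off with the lowest total cost on shadow-mode results. A minimal sketch, assuming each validated part has a model defect score (higher means more likely defective) and a ground-truth label; the per-event costs you pass in are your own plant's figures, and the values in the example are hypothetical.

```python
from typing import List, Tuple


def choose_threshold(scored: List[Tuple[float, bool]],
                     cost_false_reject: float,
                     cost_escape: float) -> Tuple[float, float]:
    """Return (threshold, total_cost); parts are rejected when score >= threshold.

    scored: (defect_score, is_defective) pairs from shadow-mode validation.
    Candidates are every observed score plus +inf (never reject).
    """
    candidates = sorted({s for s, _ in scored}) + [float("inf")]
    best = None
    for t in candidates:
        false_rejects = sum(1 for s, d in scored if s >= t and not d)
        escapes = sum(1 for s, d in scored if s < t and d)
        cost = false_rejects * cost_false_reject + escapes * cost_escape
        if best is None or cost < best[1]:
            best = (t, cost)
    return best


shadow_results = [(0.1, False), (0.2, False), (0.4, True), (0.6, False), (0.9, True)]
# Hypothetical costs: USD 5 per false reject, USD 80 per escape.
print(choose_threshold(shadow_results, cost_false_reject=5, cost_escape=80))
```

When escapes are expensive relative to false rejects, the chosen threshold drops and the line rejects more aggressively; rerunning this after each retraining is one way to keep the threshold discussion with QA grounded in data.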

Compliance, data privacy, and integration

Regulated environments

AI inspection in regulated industries requires alignment with the relevant standards. Common ones we see in B2B engagements:

| Standard / framework | Applicability | What it requires from AI inspection |
|---|---|---|
| ISO 9001 / IATF 16949 | Manufacturing, automotive | Documented inspection process, evidence retention, change control |
| FDA 21 CFR Part 11 | Pharma, medical devices (US) | Electronic records integrity, audit trails, e-signatures, validation |
| EU GMP Annex 11 | Pharma in EU | Computerized system validation, data integrity (ALCOA+) |
| GDPR / data residency | EU-based or processing EU data | Lawful basis, data minimization, residency controls |
| IEC 62443 | Industrial cybersecurity | Network segmentation, identity, secure update |
| NIST AI RMF | Cross-industry US | Govern, Map, Measure, and Manage functions for AI risk |

Data privacy and image handling

- Decide where images live: edge-only retention for highest privacy, on-prem aggregation for site-wide analytics, or cloud for cross-site benchmarking

- Apply role-based access control. Inspection images can contain process-sensitive information that competitors would value

- Log every model version and every inference. 'Which model called this part bad?' must always be answerable

- If humans appear incidentally in inspection frames, mask or redact at capture, not after. The same principles apply when AI inspection is extended to facilities and industrial assets

Integration: PLC, MES, SCADA, and ERP

- Decide the action first: reject, alert, hold for review, or just log. Different actions need different integrations

- Connect the inspection result to the PLC for physical actions (gates, diverters, indicators). This is typically a digital I/O signal or an OPC UA event

- Send pass/fail and metadata to MES (e.g., Siemens Opcenter, Rockwell FactoryTalk, GE Proficy) for genealogy and traceability

- Surface KPIs in SCADA dashboards or your operations BI tool

- Push aggregated analytics to ERP (SAP, Oracle, Microsoft Dynamics) for cost-of-quality reporting
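"Decide the action first" can be written down as an explicit routing rule before any integration work starts. A minimal sketch, assuming a single defect score per part, a review band just below the reject threshold for borderline parts, and an alert-only switch for the early pilot phase. The thresholds, the `Action` names, and the `TARGETS` mapping of actions to systems are illustrative assumptions, not a vendor API.

```python
from enum import Enum


class Action(Enum):
    LOG = "log"
    ALERT = "alert"
    HOLD = "hold_for_review"
    REJECT = "reject"


# Illustrative only: which systems each action would touch.
# PLC handles physical actions (gates, diverters, indicators); MES records every result.
TARGETS = {
    Action.REJECT: ("PLC", "MES"),
    Action.HOLD: ("PLC", "MES"),
    Action.ALERT: ("PLC", "MES"),
    Action.LOG: ("MES",),
}


def route(defect_score: float, reject_threshold: float,
          review_band: float, auto_reject_enabled: bool) -> Action:
    """Map one inspection result to an action.

    - score >= threshold: reject, or only alert while auto-rejection is disabled
    - score within review_band below the threshold: hold for human review
    - otherwise: pass, and just log the result
    """
    if defect_score >= reject_threshold:
        return Action.REJECT if auto_reject_enabled else Action.ALERT
    if defect_score >= reject_threshold - review_band:
        return Action.HOLD
    return Action.LOG


action = route(0.95, reject_threshold=0.8, review_band=0.1, auto_reject_enabled=False)
print(action, TARGETS[action])
```

Flipping `auto_reject_enabled` is the only change needed to move from alert-only mode to automatic rejection, which keeps that transition easy to review and audit.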

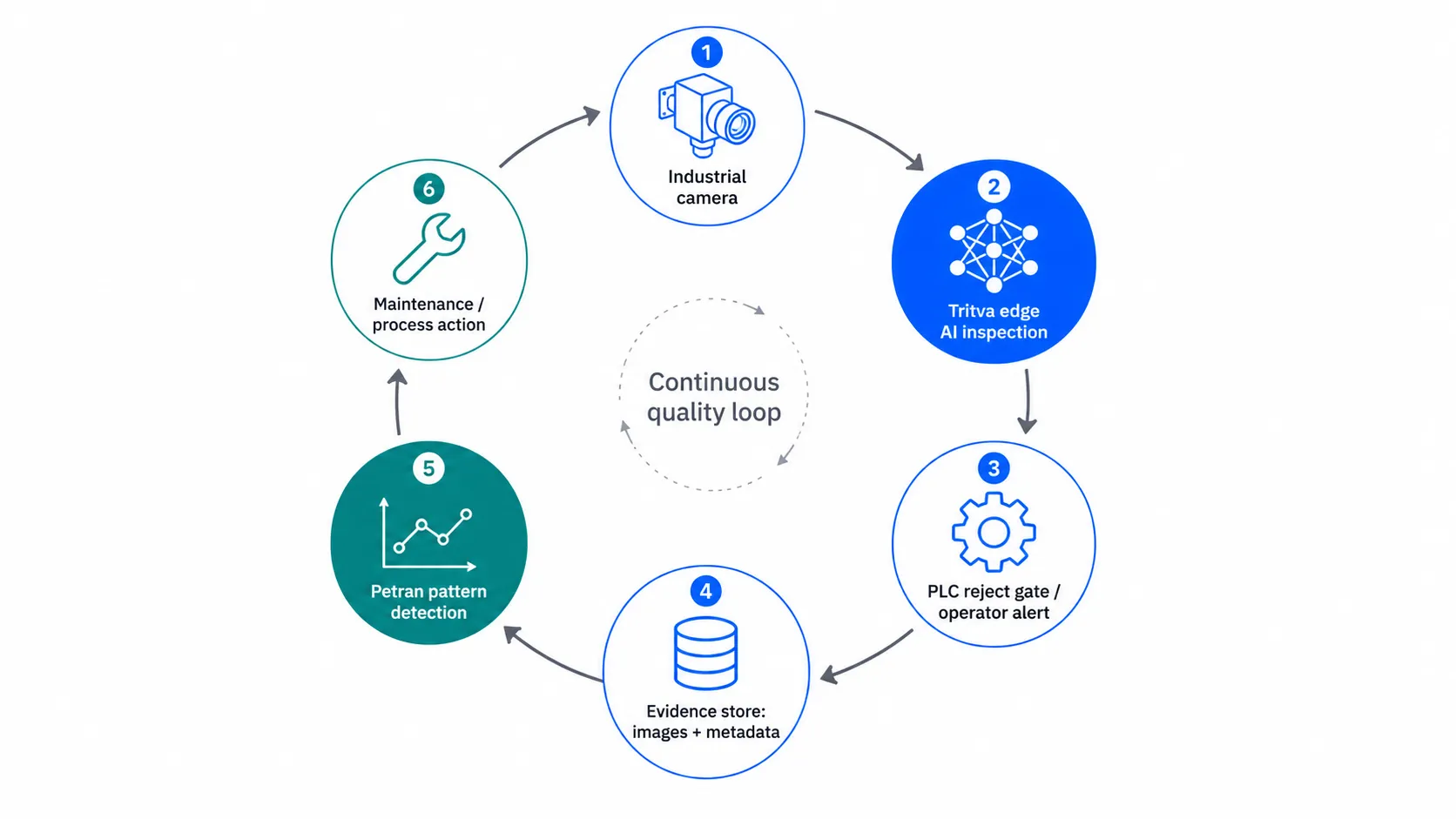

How Ombrulla deploys AI inspection: Tritva and Petran

Ombrulla is an enterprise AI platform vendor focused on industrial inspection and asset performance. Two products do the heavy lifting in a typical deployment:

Tritva - edge AI for real-time inspection

Tritva runs computer-vision inference at the station, with sub-200-millisecond latency on industrial-grade edge hardware. It triggers PLC actions, stores image evidence, and exposes results to MES and SCADA. Tritva is appropriate when the decision must happen inside takt time and the network cannot be on the critical path.

Petran - pattern detection and predictive maintenance

Petran consumes the evidence stream from Tritva (and from existing sensors) and looks for patterns: the same defect mode trending up, a specific machine producing more borderline parts late in the shift, a supplier batch with a different defect signature. Where Tritva answers 'is this part good?', Petran answers 'why are we seeing more bad parts, and what should we fix?'

The closed-loop pattern

Tritva flags a defect, the part is rejected or held, evidence is stored. Petran spots that the defect rate is climbing on machine 3 over the last four hours and triggers a maintenance alert before the next shift. The team fixes the root cause instead of sorting parts shift after shift. This closed loop is where AI visual inspection moves from a quality-control tool to a process-intelligence tool.
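The "defect rate climbing on machine 3 over the last four hours" check can be approximated by comparing each machine's recent defect rate against its own earlier baseline. A minimal sketch, assuming the evidence stream can be reduced to `(timestamp, machine_id, is_defect)` tuples; the window lengths, the 2x factor, and the minimum sample size are hypothetical defaults, and this is not a description of how Petran works internally.

```python
from collections import defaultdict
from datetime import datetime, timedelta


def machines_trending_up(events, now, window=timedelta(hours=4),
                         lookback=timedelta(hours=24), factor=2.0, min_parts=50):
    """Return machines whose defect rate in the last `window` is at least
    `factor` times their rate over the rest of the `lookback` period.

    events: iterable of (timestamp, machine_id, is_defect) tuples.
    """
    recent = defaultdict(lambda: [0, 0])    # machine -> [defects, parts]
    baseline = defaultdict(lambda: [0, 0])
    for ts, machine, is_defect in events:
        age = now - ts
        if age < timedelta(0) or age > lookback:
            continue  # ignore future-dated or stale events
        bucket = recent if age <= window else baseline
        bucket[machine][0] += int(is_defect)
        bucket[machine][1] += 1

    flagged = []
    for machine, (defects, parts) in recent.items():
        base_defects, base_parts = baseline[machine]
        if parts < min_parts or base_parts < min_parts:
            continue  # too little data to call a trend
        recent_rate = defects / parts
        base_rate = base_defects / base_parts
        if defects > 0 and recent_rate >= factor * base_rate:
            flagged.append(machine)
    return sorted(flagged)
```

A flagged machine would then raise the maintenance alert described above; requiring a minimum number of parts in both windows keeps a single bad part early in the shift from triggering a false alarm.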

Frequently asked questions

Is AI visual inspection better than manual inspection?

For repeatable inspection on production lines, yes - AI is more consistent across shifts, scales without adding headcount, and stores image evidence per part. Manual inspection still wins for prototypes, very low volumes, and subjective cosmetic grading. Most plants run a blend: AI for screening, humans for edge cases and final sign-off.

How accurate is AI visual inspection?

Production AI inspection systems typically achieve 95 to 99.9 percent accuracy on covered defect classes after a proper training and tuning cycle. Reported figures vary by application: the LG / Google Cloud case study cites 99.9 percent at 0.8 seconds per 200 images. Accuracy depends on data quality, lighting and camera control, and the difficulty of the defect, not on the algorithm alone. Because defects are rare, track escape rate and false-reject rate alongside headline accuracy: at a 0.4 percent defect rate, a model that passes every part still scores 99.6 percent accuracy.

What data do you need to start AI visual inspection?

At minimum: 1,000 or more images of good parts and 100 or more per defect class, captured under real production lighting (not a lab), with consistent labeling. You also need a labeling guideline agreed with QA, the station identifier, and a pass/fail decision per image. Imbalanced data - too few defect examples - is the most common failure mode.

How do you reduce false rejects in automated inspection?

Three steps. First, fix lighting and camera stability - variability here dominates model error. Second, define the reject threshold with QA using real false-reject cost versus escape cost. Third, train on real production variation, not perfect samples. Keep a human-review lane for borderline cases until the false-reject rate stabilizes for at least 10 shifts.

Should AI visual inspection run on edge or cloud?

Use edge when decisions must happen in milliseconds, when the network is unreliable, or when data cannot leave the plant. Use cloud when latency tolerance is several seconds, you need centralized analytics, or you want easier model updates. Most production deployments use both: edge for inference, cloud for retraining, dashboards, and cross-site benchmarking.

How do you measure ROI for AI inspection?

Track five numbers: escape rate, false-reject rate, rework minutes per shift, scrap, and inspection-related downtime. Convert each into cost per shift, then compare against the fully loaded cost of the AI deployment (hardware, software, model training, integration). ROI is strongest on multi-shift lines where small per-part savings repeat thousands of times.

How long does AI visual inspection take to deploy?

A focused single-station pilot typically takes 60 to 90 days from data collection to validated production. Multi-station rollouts take three to nine months depending on integration scope (PLC, MES, SCADA), defect complexity, and how much data already exists. The bottleneck is almost never the algorithm - it is data labeling, lighting, and integration.

What are the limitations of AI visual inspection?

AI inspection still requires stable cameras, controlled lighting, and ongoing monitoring as products evolve. Rare defects with very few examples are hard. The system needs retraining when new SKUs, suppliers, or materials are introduced. AI does not replace process control - it shows you what is happening faster and more consistently. Treat it as part of a quality system, not a substitute for one.

How does AI visual inspection integrate with PLC, MES, and SCADA?

Inspection results connect to the PLC via digital I/O or OPC UA for physical actions like reject gates. Pass/fail records and metadata flow to MES (Siemens Opcenter, Rockwell FactoryTalk, GE Proficy) for genealogy. Aggregated KPIs surface in SCADA or BI dashboards. Cost-of-quality data rolls up to ERP. Plan the integration before the pilot, not after.

How is AI inspection different from rule-based machine vision?

Rule-based machine vision uses fixed image-processing rules (thresholding, edge detection, blob analysis) tuned by an engineer. It works on stable, high-contrast checks. AI inspection learns from labeled examples and generalizes to variation in surface, lighting, and supplier inputs. Rule-based vision needs retuning when conditions change. AI vision needs retraining when defect modes change.

Start a 90-day pilot with Ombrulla

If you are evaluating AI visual inspection vs traditional inspection methods at one or more stations, the fastest way to a defensible answer is a scoped pilot with measurable success criteria. Ombrulla typically runs the discover-train-validate cycle in 90 days, with Tritva at the edge and Petran for pattern detection.

Book an AI visual inspection assessment with Ombrulla to scope a pilot for your line and understand the ROI potential for your specific use case.

References

- BMW Group. (2023). AIQX in the iFACTORY: in-line quality intelligence with AI. bmwgroup.com

- Google Cloud. (2024). LG manufacturing case study: edge AI for high-speed visual inspection. services.google.com

- Uster Technologies. (2024). From manual to automated fabric inspection. Techtextil 2024 press release. uster.com

- Reuters. (n.d.). Terra Drone and Saudi Aramco: drone inspection programs. reuters.com

- Princeton University. (2024). GEO research: empirical study of LLM citation factors across 10,000 queries. princeton.edu